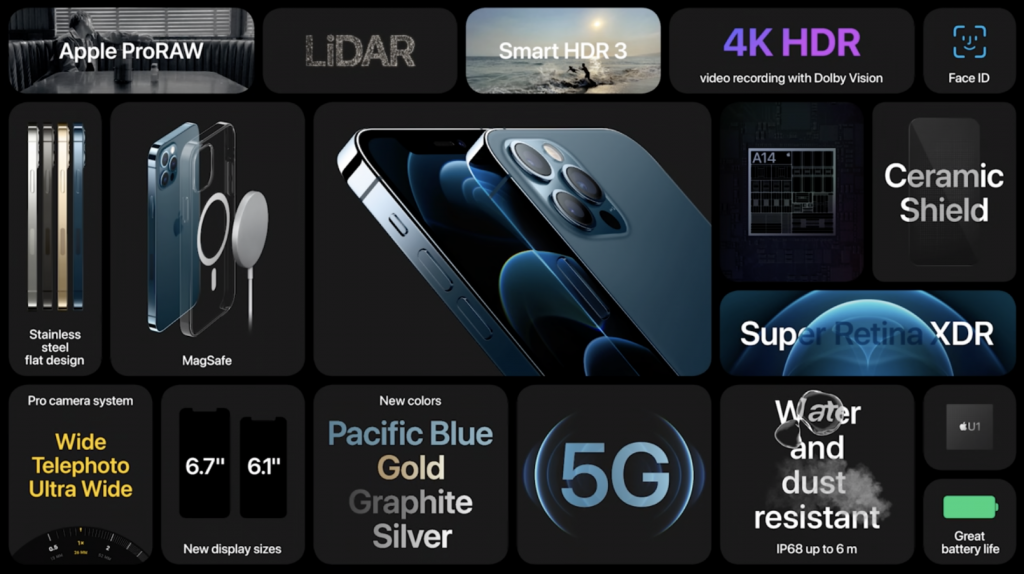

It’s iPhone 12 day! A lot was announced today, from tiny HomePods to new iPhones and magnetic accessories, but as the makers of one of the better-known camera apps on iOS, we really cared about one thing:

What’s new in iPhone photography?

Here at Halide HQ (which, in COVID 2020, means all three of us at home) we were reeling from the amount of information dropped on us. Here’s a very quick summary.

You can also read our initial impressions on Twitter, of course.

Even though most iPhone users are unlikely to be upgrading from an iPhone 11, we’ll use last year’s phone as our baseline to see what is new in iPhone camera hardware-land.

Note that most major improvements in photography on phones come in software nowadays; we usually expect to see minor changes in hardware. Evolution, not revolution.

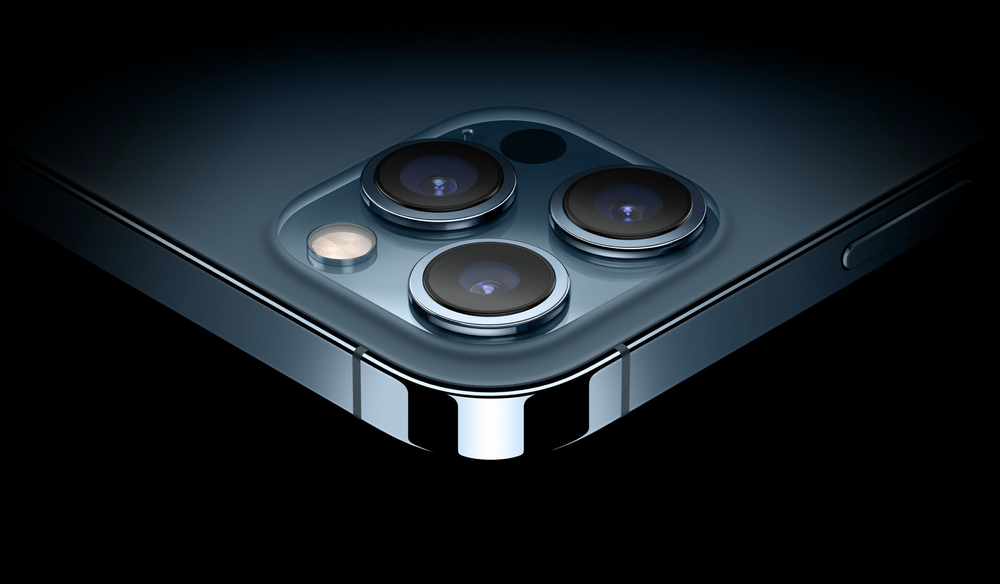

Let’s start with what’s unchanged: on the iPhone 12, 12 mini and 12 Pro (non-Max), the main (or “wide”) and ultra-wide cameras keep the same sensors. The 12 Pro also keeps the same telephoto lens and sensor as the 11 Pro.

That’s where similarities end. That main wide camera gets a new lens, which according to Apple lets in 27% more light thanks to its faster (that is, bigger) ƒ1.6 aperture (previously ƒ1.8) and sharper 7-element construction.
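
Apple’s 27% figure lines up with simple aperture math: the light a lens passes scales with the area of its opening, which goes as one over the f-number squared. Here is a minimal sketch of that check; the function name is ours, purely for illustration.

```python
def light_gain(old_f_number: float, new_f_number: float) -> float:
    """Relative light through the lens: aperture area scales with 1 / N^2."""
    return (old_f_number / new_f_number) ** 2


# f/1.8 on the iPhone 11 wide camera vs. f/1.6 on the iPhone 12
gain = light_gain(1.8, 1.6)
print(f"{(gain - 1) * 100:.0f}% more light")  # 27% more light
```

This ignores lens transmission losses, so treat it as a sanity check rather than a measurement.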

There is some mention of sharpness improvements on the ultra-wide lens, but we’re unsure if this is a software correction improvement or a new lens design — or both. The images certainly look sharper.

But if you like large phones, this is your year. The iPhone 12 Pro Max has the real goods.

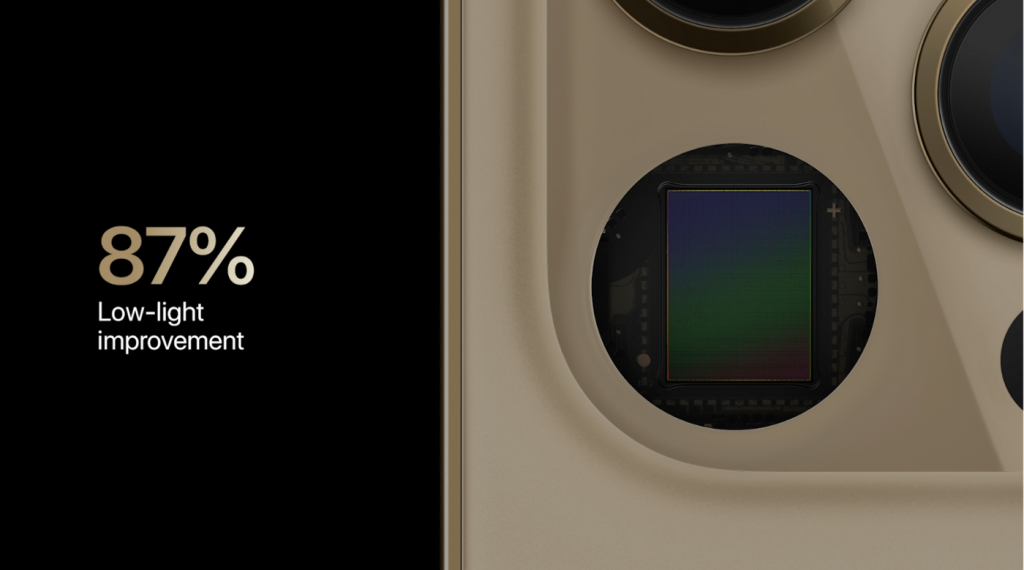

In addition to a better lens, the 12 Pro Max has the room to pack a new, 47% larger sensor. That means bigger pixels, and bigger pixels that capture more light simply mean better photos. More detail in the day, more light at night. That combines with the lens to result in almost twice as much light captured: Apple claims an 87% improvement in light capture from the 11 Pro. That’s huge.
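
The 87% figure looks like the two claims multiplied together, and the pixel count staying at 12 megapixels tells us roughly how much each pixel grows. A quick sketch of that arithmetic follows. The 1.4 µm starting pixel pitch is our assumption about the previous wide sensor, not something stated above.

```python
import math

SENSOR_AREA_GAIN = 1.47   # Apple's claim: 47% larger sensor
LENS_LIGHT_GAIN = 1.27    # Apple's claim: 27% more light from the f/1.6 lens
OLD_PIXEL_PITCH_UM = 1.4  # assumption: pixel pitch of the previous wide sensor, in micrometers

# Sensor area and lens speed multiply: each pixel is bigger AND sees more light.
total_gain = SENSOR_AREA_GAIN * LENS_LIGHT_GAIN

# Same pixel count on a larger area: pitch grows with the square root of the area.
new_pixel_pitch_um = OLD_PIXEL_PITCH_UM * math.sqrt(SENSOR_AREA_GAIN)

print(f"{(total_gain - 1) * 100:.0f}% more light")  # 87% more light
print(f"{new_pixel_pitch_um:.1f} µm pixels")        # 1.7 µm pixels
```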

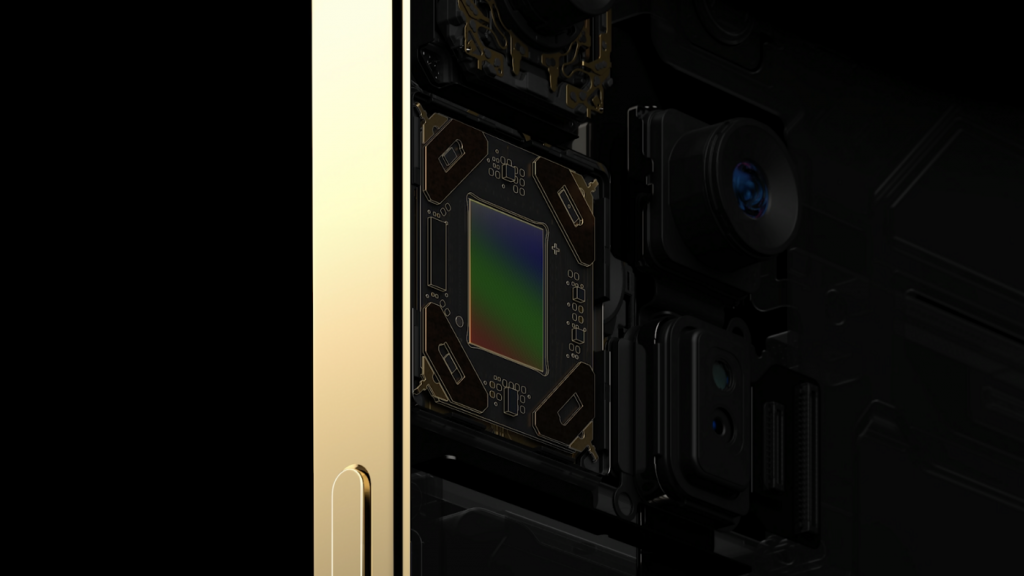

But that’s not its only trick: the 12 Pro Max’s Wide system also gets a new sensor-shift OIS system. OIS, or Optical Image Stabilization, lets your iPhone move the camera around a bit to compensate for your decidedly unsteady human trembly hands. That results in smoother video captures and sharp shots at night, when the iPhone has to take in light over a longer amount of time.

Again, only the 12 Pro Max gets this. The system can make thousands of corrections per second, and it will be interesting to see how much of a difference that makes against its smaller siblings.

Photos out of this camera system will be pretty fantastic. Conspicuously absent is a big megapixel boost. Android flagship phones have been touting absurd megapixel counts (like Samsung’s 108 megapixel camera); Apple is simply not participating in this race.

The megapixels across the board are unchanged. This huge new sensor? 12 megapixels. The ultrawide? 12 megapixels. The telephoto? You guessed it, 12. The selfie camera? 12 megapixels.

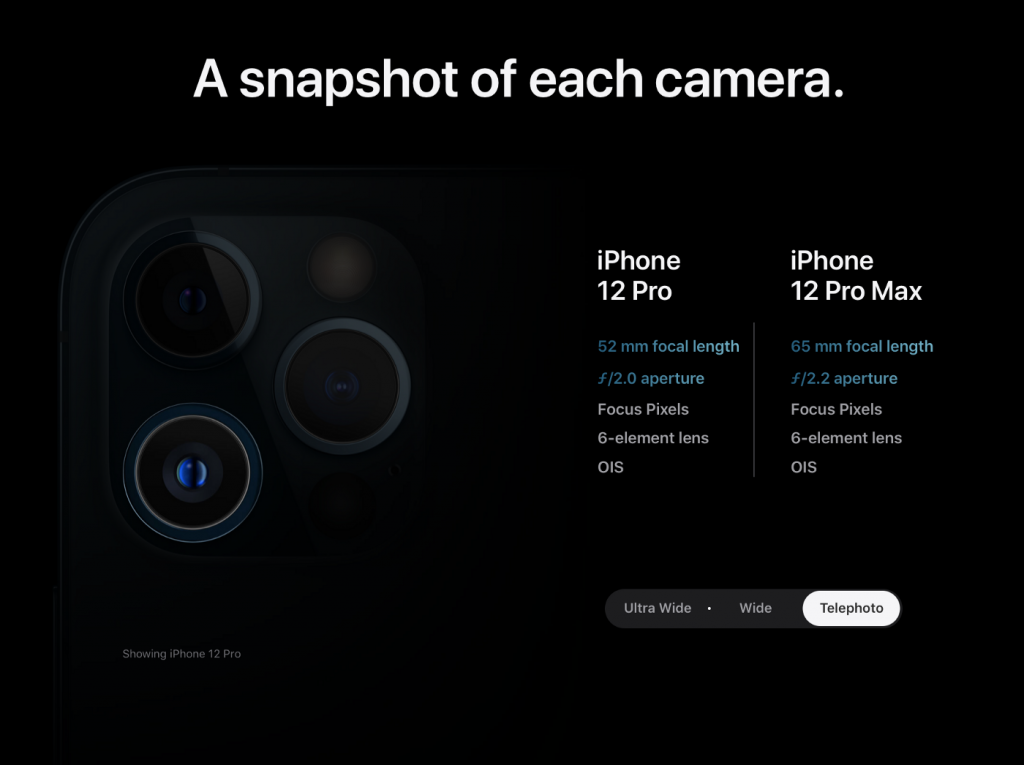

On the Pro Max, the telephoto camera also gets an upgrade. It can look a bit further now, to 65mm vs. its previous 52mm. Why only the Pro Max gets this is a bit of a mystery, but it will enable a nice, high zoom range (again, nothing crazy here like Huawei’s 100x zoom). The new 65mm telephoto lens does lose a little bit of light gathering capability as its lens is a bit slower at ƒ2.2 vs. the iPhone 11 Pro / 12 Pro’s ƒ2.0 52mm lens.
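
To put numbers on that trade-off, here is a small sketch comparing the two telephoto lenses. It assumes the main wide camera is a 26mm equivalent, which is our assumption and not stated above; the helper names are illustrative only.

```python
WIDE_EQUIV_MM = 26.0  # assumption: 35mm-equivalent focal length of the main wide camera


def zoom_factor(tele_mm: float, wide_mm: float = WIDE_EQUIV_MM) -> float:
    """Optical zoom relative to the wide camera."""
    return tele_mm / wide_mm


def relative_light(old_f_number: float, new_f_number: float) -> float:
    """Light passed by the new lens relative to the old one (area scales with 1 / N^2)."""
    return (old_f_number / new_f_number) ** 2


print(zoom_factor(52))  # 2.0
print(zoom_factor(65))  # 2.5

loss = 1 - relative_light(2.0, 2.2)
print(f"about {loss * 100:.0f}% less light")  # about 17% less light
```

So under that assumption the longer lens buys half a stop of extra reach in zoom terms, at the cost of roughly a sixth of the light.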

Finally, the 12 Pro and 12 Pro Max both gain something we’ve seen in the new iPad Pro: a LiDAR sensor. What’s a LiDAR sensor? We did a very deep dive, complete with a proof of concept app for mapping 3D space, in an earlier blog post.

LiDAR on the iPad Pro did more for AR and very little for photography. On the iPhone 12 Pro, it promises to vastly speed up autofocus and help with Portrait mode.

As it stands, Portrait mode combines data from multiple cameras and uses machine learning to add depth to a scene. More data is always better, so we’re excited to dive into it with Halide’s Depth Mode to see how high resolution the new combined depth sensing is.

Hardware Summary:

iPhone 11 to iPhone 12: A better wide camera lens that collects more light, and an ultrawide camera with unspecified improvements.

iPhone 11 Pro to iPhone 12 Pro: The same improvements as above, and the same telephoto camera as the iPhone 11 Pro. But: LiDAR!

iPhone 11 Pro to iPhone 12 Pro Max: Okay, now there’s no comparison. This is a much better Wide camera, with almost double the light collection, a new sensor, improved OIS and more. It has a new and greater zoom range on its telephoto lens. This thing is a beast. And it also gets LiDAR!

Software

As we said before, we expect the greatest leaps in phone photography to come from software. Google has demonstrated this, from the first Night Mode that pulls off seemingly impossible night shots on tiny phone cameras to various other computational tricks.

The key here is combining the very powerful processors in our phones with the ability to take many photos at once; something that small sensors can do better than the large and high-megapixel sensors of large professional mirrorless and SLR digital cameras. This is how our app Spectre (which Apple chose as 2019’s App of the Year) can do handheld 9-second long exposures. It’s all in the smarts.
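
To see why taking many photos helps, here is a toy simulation of frame stacking: averaging several noisy short exposures of the same scene. This is a generic sketch, not how Spectre or Apple’s pipeline is implemented. It assumes the frames are already perfectly aligned, and all names and noise values are made up for illustration.

```python
import random
import statistics


def capture_frame(scene, noise_sigma, rng):
    """Simulate one short exposure: the true scene plus random sensor noise."""
    return [pixel + rng.gauss(0.0, noise_sigma) for pixel in scene]


def stack_frames(frames):
    """Average aligned frames pixel by pixel."""
    return [sum(values) / len(values) for values in zip(*frames)]


def noise_level(image, scene):
    """Standard deviation of the error against the known scene."""
    return statistics.pstdev([a - b for a, b in zip(image, scene)])


rng = random.Random(12)    # fixed seed so the run is repeatable
scene = [0.5] * 4000       # a flat gray "scene" (hypothetical test data)

single = capture_frame(scene, 0.1, rng)
stacked = stack_frames([capture_frame(scene, 0.1, rng) for _ in range(16)])

single_noise = noise_level(single, scene)
stacked_noise = noise_level(stacked, scene)
print(f"single frame noise: {single_noise:.3f}")
print(f"16-frame stack noise: {stacked_noise:.3f}")
```

With random noise, averaging N frames cuts the noise by roughly the square root of N, so 16 frames should land near a quarter of the single-frame noise. Real pipelines also have to align frames and cope with motion, which is where the processing power goes.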

We noticed that with the iPhone XS, Apple started to publicly shed light on, and market, its smart computational photography systems. Smart HDR was introduced then, which promised to combine many exposures at once in an instant when you press the shutter to produce images with better detail in shadows and highlights. It wasn’t perfect; some felt like it was aggressively smoothing over faces, for instance.
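
Apple has not published how Smart HDR works, but the general idea of merging exposures can be sketched with a generic exposure-fusion weighting: for every pixel, trust whichever exposure is closest to a mid-tone. The code below is that generic technique under our own assumptions (pixel values in 0 to 1, a Gaussian weight around 0.5), not Apple’s method.

```python
import math


def well_exposedness(value, sigma=0.2):
    """Weight near 1 for mid-tones, small for crushed shadows or clipped highlights."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))


def fuse_pixel(samples):
    """Blend one pixel's values across exposures, favoring well-exposed samples."""
    weights = [well_exposedness(v) for v in samples]
    return sum(w * v for w, v in zip(weights, samples)) / sum(weights)


def fuse_exposures(exposures):
    """Fuse a list of same-sized exposures (each a list of values in 0..1)."""
    return [fuse_pixel(samples) for samples in zip(*exposures)]


# Two hypothetical exposures of a shadow pixel and a highlight pixel.
short_exposure = [0.02, 0.6]  # shadow is crushed, highlight is fine
long_exposure = [0.4, 1.0]    # shadow is fine, highlight is clipped

fused = fuse_exposures([short_exposure, long_exposure])
print(fused)  # shadow pulled up toward 0.4, highlight kept near 0.6
```

A per-pixel blend like this is also a hint at why faces could end up looking smoothed: the weighting knows nothing about what is in the picture.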

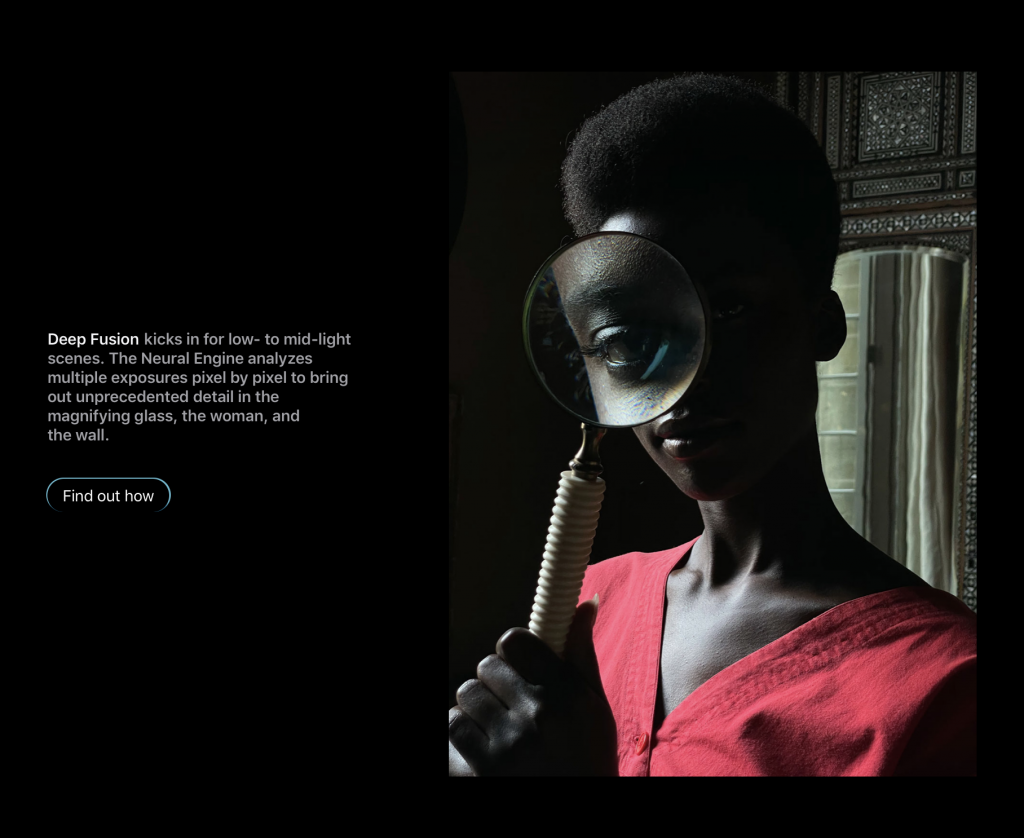

With Smart HDR 3 on iPhone 12 and 12 Pro, Apple segments images to prevent that kind of thing happening. This was already happening on iPhone 11, but this is a software challenge that only gets better with more processing power. Apple’s other computational photography technique, Deep Fusion, which kicks in in low and medium light, is promised to be improved as well.

Once you get more processing power, you can also start combining all these smart photography processes. We previously got Night Mode on iPhone 11 and 11 Pro.

Now it’s everywhere: Night mode is coming to the ultra-wide camera and selfie (TrueDepth) camera. What’s more, iPhone 12 and 12 Pro do just that smart combining of technologies: you can now get Night Mode to kick in on your Portrait photos.

This is big: a lot of crazy computational photography happening in real time. For this to work, the phone has to compare data from LiDAR and multiple cameras to infer depth and render real-time blur, all while doing some crazy Night Mode enhancements. This is where the incredibly fast A14 chip, with its Neural Engine and bigger ISP (image signal processor), comes in handy.
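
As a rough picture of the “render blur from depth” step, here is a one-dimensional toy: each pixel gets blurred more the further its depth is from the focus distance. The blur size follows the thin-lens rule of thumb that defocus grows with the difference of inverse distances. The strength and radius values are arbitrary assumptions, and this is in no way Apple’s Portrait mode implementation.

```python
def blur_radius(depth_m, focus_m, strength=6.0, max_radius=8):
    """Blur radius in pixels; defocus grows with |1/focus - 1/depth| (thin-lens rule of thumb)."""
    return min(max_radius, round(strength * abs(1 / focus_m - 1 / depth_m)))


def portrait_blur(row, depths, focus_m):
    """Box-blur each pixel of a 1D image row with a depth-dependent radius."""
    blurred = []
    for i, depth in enumerate(depths):
        r = blur_radius(depth, focus_m)
        window = row[max(0, i - r): i + r + 1]
        blurred.append(sum(window) / len(window))
    return blurred


# Hypothetical row: a high-contrast pattern, subject at 1 m, background at 5 m.
row = [0, 1, 0, 1, 0, 1, 0, 1]
depths = [1.0, 1.0, 1.0, 1.0, 5.0, 5.0, 5.0, 5.0]

result = portrait_blur(row, depths, focus_m=1.0)
print(result)  # subject pixels stay sharp, background pattern smears toward gray
```

The better the depth map, the cleaner the edge between the sharp and blurred halves, which is why extra depth data from LiDAR is interesting here.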

Apple benefits from this tight integration of hardware and software: the new chip and better software enable better noise reduction, which means better video in low light (and photos, probably). Apple claims 87% better low-light video on the iPhone 12 Pro Max, and states that on all phones the new ISP allows better noise reduction for more detail. Apple says the ISP is to thank, but I bet some machine learning is involved in this noise reduction, too.

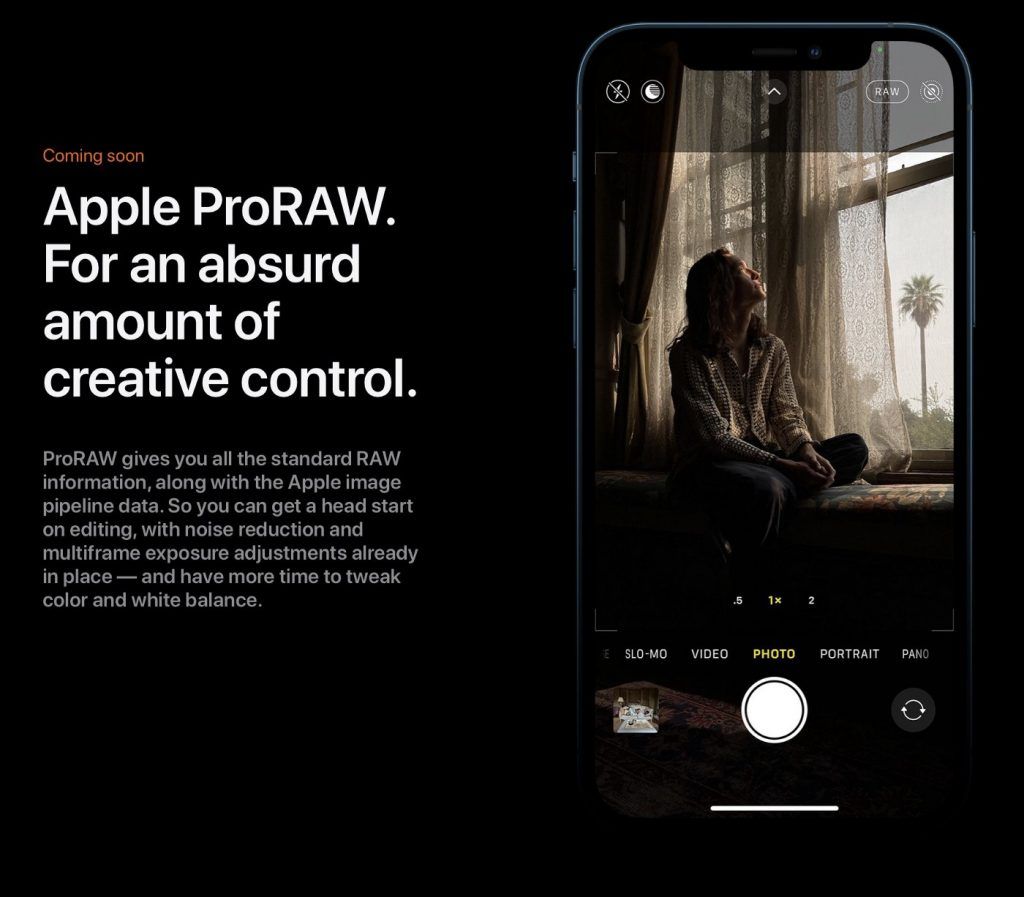

RAW comes to iPhone

This was scary to us for a moment: a lot of people buy Halide to shoot RAW on iPhone. And why not? The cameras on iPhones are incredibly impressive and can shoot shots like this in RAW:

Now, RAW gets stage time. Apple announced ProRAW. We had previously written about how RAW is both handicapped by and benefits from skipping the ‘smart’ photography of Smart HDR and Deep Fusion, which prompted us to release a Halide update with a feature called Smart RAW.

ProRAW, according to Apple, gives you the standard RAW along with this pipeline information, which should offer some fantastic flexibility when editing. Note that this might be a custom format: little is known beyond the announcement itself, and it might be limited to the iPhone 12 Pro.

It’s also listed as ‘coming soon’.

More on that as we find out about it. Apple announced an API, so we’re eager to get our hands dirty on it.

Other improvements

There’s a small slew of other improvements: machine learning improvements are said to have further improved Portrait mode. Night mode now comes to time-lapses, and video can now be captured in 10-bit HDR with Dolby Vision, a remarkable first. As we learn more about the software and hardware of these devices, including their Technical Readouts, we’ll post them here.

Feel free to send us any questions on Twitter!