When the iPhone was first introduced, it packed in quite a few innovations. At a now-legendary keynote, Steve Jobs announced that Apple was launching a widescreen iPod, a phone and an internet communications device. These three devices were not separate new products, he revealed — they were all functions of a revolutionary new device: the iPhone.

Were it introduced today, we’d find a conspicuous absence in that lineup: a groundbreaking new camera. It was not one of the devices he mentioned, and for good reason: the iPhone launched with a 2-megapixel, fixed-focus shooter. There was no pretense about this replacing your camera for taking photos.

Fast forward to today, and the main feature-point of most smartphone launches is, of course, their cameras. Plural. In the time since the iPhone launched, it has gone through small steps and big leaps, from a single fixed-focus camera to an entire complex array of cameras.

With iPhone 14 Pro, Apple has made a huge change to its entire camera system — and yet, it seems many reviewers and publications cannot agree on whether it is a small step or a huge leap.

At Lux, we make a camera app. We have been taking thousands of iPhone photos every month since we launched our app five years ago, and we know a thing or two about smartphone cameras. This is our deep dive into iPhone 14 Pro — not the phone, the iPod, or the internet communicator — but the camera.

Changes

We previously took a look at the technical readout of the iPhone 14 Pro, offering us a chance to look at the camera hardware changes. Our key takeaway was that there are indeed some major changes, even if just on paper. The rear camera bump is almost all-new: the iPhone 14 Pro gains larger sensors in its ultra-wide and main (wide) cameras, but Apple also promises leaps in image quality through improved software processing and special silicon.

However, what caught everyone’s eye first was the opener of Apple’s presentation of iPhone 14 Pro: a striking visual change. The iPhone’s recognizable screen cutout or ‘notch’ had all but disappeared, tucking its camera and sensor hardware into a small yet dynamic ‘island’. The user interface adapts around it, growing and shrinking with the screen cutout in an absolute feat of design. What’s more impressive to us, however, is the miniaturization of the large, complex array of sensors and camera needed for Face ID.

Front-Facing

While the large cameras protruding ever-further from the rear of our iPhones capture our attention first — and will certainly get the most attention in this review, as well — the front-facing camera in the iPhone 14 Pro saw one of the biggest upgrades in recent memory, with its sensor, lens and software processing seeing a significant overhaul.

While the sensor size of the front-facing camera isn’t massive (nor do we believe it has to be), upgrades in its lens and sensor allowed for some significant improvements in dynamic range, sharpness and quality. The actual jump between a previous-generation iPhone’s front camera and this new shooter is significant enough for most people to notice immediately. In our testing, the iPhone 14 Pro achieved far sharper shots with vastly — and we mean vastly — superior dynamic range and detail.

The previous cameras were simply not capable of delivering very high-quality images or video in challenging mixed light or backlit subjects. We’re seeing some significant advancements made through better software processing (something Apple calls the Photonic Engine) and hardware.

While the sensor is larger and there is now variable focus (yes, you can use manual focus on the selfie camera with an app like Halide now!) you shouldn’t expect beautiful bokeh; the autofocus simply allows for much greater sharpness across the frame, with a slight background blur when your subject (no doubt a face) is close enough. Most of the time it’s subtle, and very nice.

Notable is that the front facing camera is able to focus quite close — which can result in some pleasing shallow depth of field between your close-up subject and the background:

Low light shots are far more usable, with less smudging apparent. Impressively, the TrueDepth sensor also retains incredibly precise depth data sensing, despite its much smaller package in the Dynamic Island cutout area.

This is one of the times where Apple glosses over a very significant technological leap that a hugely talented team no doubt worked hard on. The competing Android flagship phones have not followed Apple down the technological path of high-precision, infrared-based Face ID, as it requires large sensors that create screen cutouts.

The ‘notch’ had shrunk in the last generation of iPhones, but this far smaller array retains incredible depth sensing abilities that we haven’t seen another product even approximate. And while shipping a software feat — the Dynamic Island deserves all the hype it gets — Apple also shipped a significant camera upgrade that every user will notice in day-to-day usage.

Ultra-Wide

Time to break into that large camera bump on the rear of iPhone 14 Pro. The ultra-wide camera, introduced with iPhone 11 in 2019, has long played a background role to the main wide camera due to its smaller sensor and lack of sharpness.

Last year, Apple surprised us by giving the entire ultra-wide package a significant upgrade: a more complex lens design allowed for autofocus and extremely-close focus for macro shots, and a larger sensor collected more light and allowed for far more detailed shots.

We reviewed it as finally growing into its own: a ‘proper’ camera. While ultra-wide shots were impressively immersive, the iPhone 11 and 12 Pro’s ultra-wide shots were not sharp enough to capture important memories and moments. With iPhone 13 Pro, it was so thoroughly upgraded that it marked a big shift in quality. As a result, we were not expecting any significant changes to this camera in iPhone 14 Pro.

Color us surprised: with iPhone 14 Pro’s ultra-wide camera comes a much larger sensor, a new lens design and higher ISO sensitivity. While the aperture took a small step back, the larger sensor handily offsets this.

Ultra-wide lenses are notorious for being less than sharp, as they have to collect an incredible amount of image at dramatic angles. Lenses—being made of a transparent material—will refract light at an angle, causing the colors to separate. The wider the field of view of the lens, the more challenging it is to create a sharp image as a result.

The iPhone’s ultra-wide camera has a very wide lens. ‘Ultra-wide’ has always lived up to its name: with a 13mm full-frame equivalent focal length it almost approximates the human binocular field of view. GoPro and other action cameras have a similar field of view, allowing a very wide angle for capturing immersive video and shots in tight spaces. These cameras have not traditionally been renowned for optical quality, however, and we’ve not seen Apple challenge that norm. Is this year’s camera different?

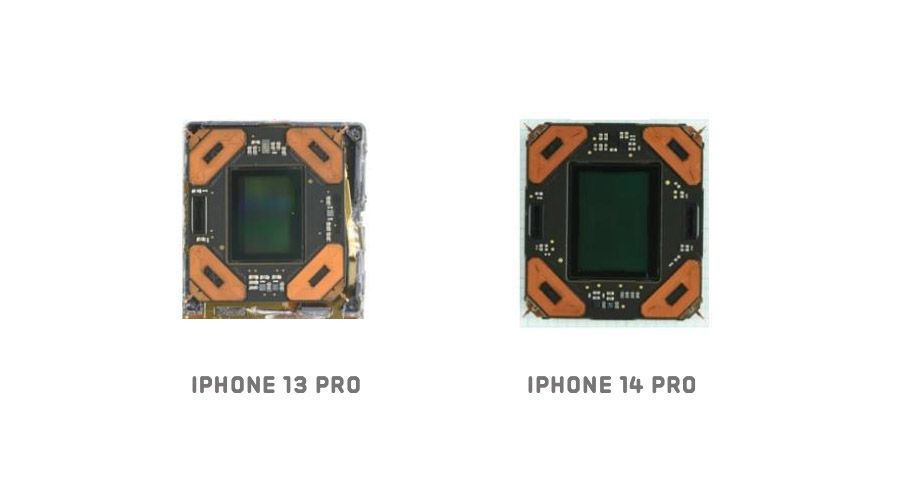

Apple did not go into great detail about the camera hardware changes here, but thankfully TechInsights took the entire camera package apart. The new sensor, at 40 mm², is almost 50% larger than the iPhone 13 Pro’s 26.9 mm² sensor. While its aperture is slightly ‘slower’ (that is, smaller) the larger sensor compensates.
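The ‘almost 50%’ figure follows directly from the two sensor areas quoted above. A quick check, with a helper name that is ours and purely illustrative:

```python
def percent_larger(new_area_mm2: float, old_area_mm2: float) -> float:
    """Return how much larger new_area is than old_area, in percent."""
    return (new_area_mm2 / old_area_mm2 - 1.0) * 100.0


# Ultra-wide sensor areas as quoted in the teardown figures above.
IPHONE_14_PRO_ULTRAWIDE_MM2 = 40.0
IPHONE_13_PRO_ULTRAWIDE_MM2 = 26.9

increase = percent_larger(IPHONE_14_PRO_ULTRAWIDE_MM2, IPHONE_13_PRO_ULTRAWIDE_MM2)
print(f"{increase:.1f}% larger")  # 48.7% larger
```

This only compares sensor area. It says nothing about how much of that gain the slower aperture gives back, since the source does not quote the aperture values.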

How do the sensor and lens changes stack up in practice? The iPhone 14 Pro easily outperforms the previous iPhone, creating far sharper shots:

In multiple shots in various conditions, the shots were far more detailed and exhibited less visible processing than comparable iPhone 13 Pro shots:

A nice side benefit of a larger sensor is a bit more shallow depth of field. At a 13mm full-frame equivalent, you really can’t expect too much subject separation, but this shot shows some nice blurry figures in the background.

I believe that both Apple’s new Photonic Engine and the larger sensor are contributing to a lot more detail in the frame, which makes a cropped image like the above shot possible. The ‘my point of view’ perspective that the ultra-wide provides puts you in the action, and a lack of detail makes every shot less immersive.

Is it all perfect? Well, comparatively, with the main camera getting ever better, it is still fairly soft and lacking detail. Its 12 megapixel resolution is suddenly feeling almost restrictive. Corners are still highly distorted and soft at times, despite excellent automatic processing from the system to prevent it from looking too fisheye-like.

Low Light

One thing that we really wanted to test was the claims of low-light performance. Across the board, Apple is using larger sensors in the iPhone 14 Pro, but it also claims that its new Photonic Engine processing can offer an ‘over 2× greater’ performance in low light.

Looking at an ultra-wide image in low light, we are seeing… some improvements:

I shot various images in all sorts of lighting, from mixed lighting in daylight to evening and nighttime shots, and found that the ultra-wide camera still did not perform amazingly in low light. The shots are good, certainly, but the image processing is strong and apparent, getting sharp shots can be tricky, and noise reduction is very visible.

With Night Mode enabled, processing looked equally strong between iPhone 13 Pro and iPhone 14 Pro.

Compared to the iPhone 13 Pro, we’re struggling to see a two- or three-fold improvement.

I’d find it challenging either way to quantify an improvement as simply as ‘2×’ of a camera’s previous performance. In terms of hardware, one can easily say a camera gathers twice as much light, but software processing is much more subjective. Perhaps to you, this is twice as good. For others, maybe not.

I’ll say, however, that it’s understandable: this is a tiny sensor, behind a tiny lens, trying its best in the dark. I wouldn’t expect fantastic low light shots. Since the ultra-wide camera does not gain a lens or sensor that is twice as fast or large, we can’t judge this as being a huge leap. It’s a solid improvement in daylight, however.

Overall I consider this camera a solid upgrade. A few iPhone generations ago, this would have been a fantastic main camera, even when cropped down to the field of view of the regular wide camera. It is a nice step up in quality.

A word about macro.

iPhone 13 Pro had a surprise up its sleeve last year, with a macro-capable ultra-wide camera. Focusing on something extremely close will stress a lens and sensor to its fullest, which is a great measure of how sharp it can capture images. iPhone 13 Pro’s macro shots we took were often impressive for a phone, but quite soft. Fine detail was not preserved well, especially when using Halide’s Neural Macro feature which further magnified beyond the built-in macro zoom level.

Macro-heavy photographers will rejoice in seeing far more detail in their macro shots. This is where the larger sensor and better processing make their biggest leaps; it is a very big upgrade for those who like the tiny things.

Main

Since 2015, the iPhone has had a 12 megapixel main (or ‘wide’) camera. Well ahead of Apple’s event last month, there had been rumors that this long era — 7 years is a practical century in technology time — was about to come to an end.

Indeed, iPhone 14 Pro was announced with a 48 megapixel camera. Looking purely at the specs, it’s an impressive upgrade: the sensor is significantly larger in size, not just resolution. The lens is slightly slower (that is, its aperture isn’t quite as large as the previous year’s) but once again, the overall light-gathering ability of the iPhone’s main shooter improves by as much as 33%.

The implications are clear: more pixels for more resolution; more light gathered for better low light shots, and finally, a bigger sensor for improvements in all areas including more shallow depth of field.

There’s a reason we chase the dragon of larger sensors in cameras; they allow for all our favorite photography subjects: details, low light and night shots, and nice bokeh.

Quad Bayer

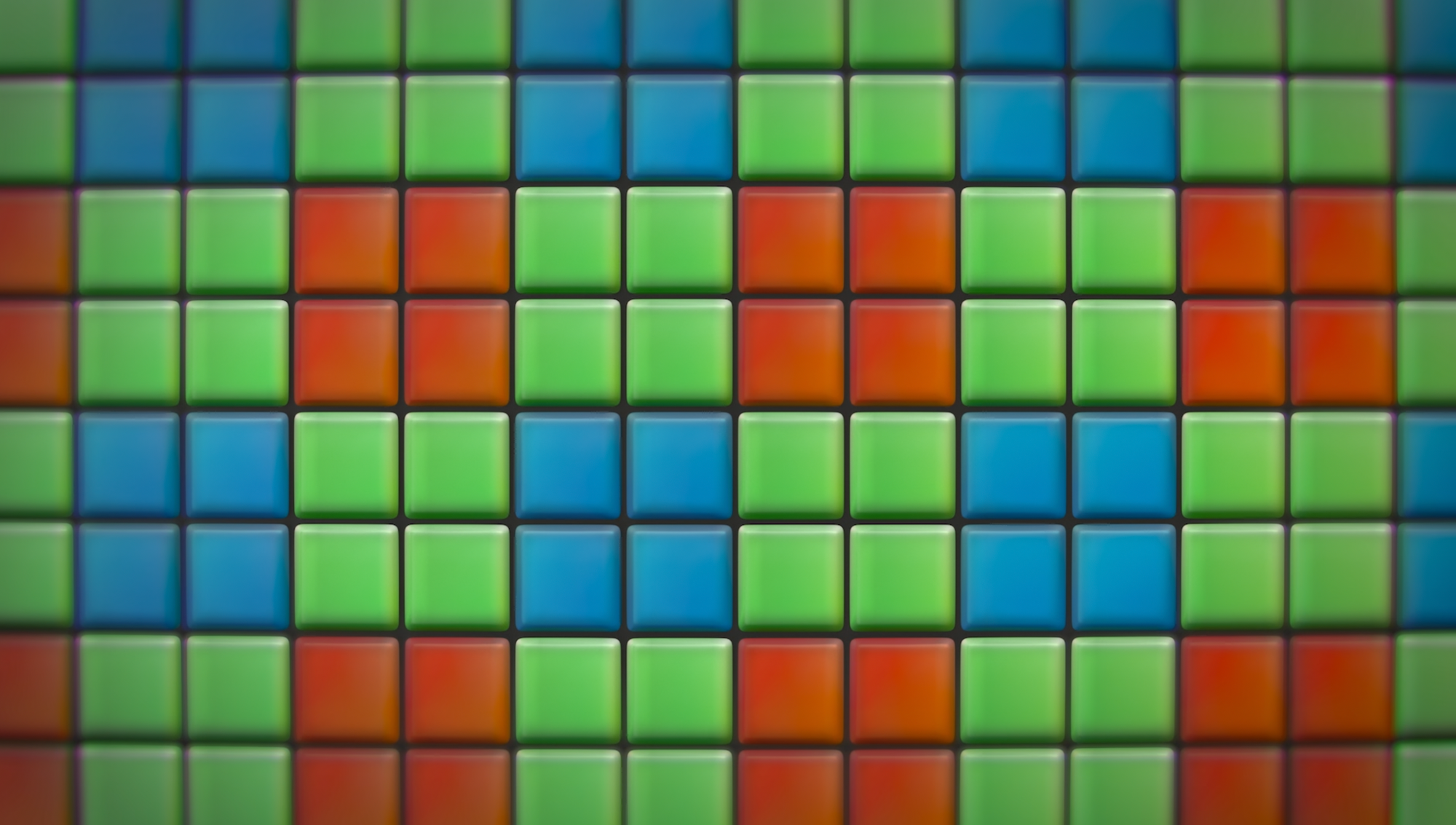

Your average digital camera sensor has a particularly interesting way to capture color. We’ve previously detailed this in our post about ProRAW, which you can read here, but we’ll quickly go over it again.

Essentially, a camera sensor contains tiny pixels that can detect how much light comes in. For a dark area of an image, it will register darkness accurately, and vice versa for lighter areas. The trick comes in sensing color; to get that in a shot, sensors have red, green and blue color filters on these tiny pixels.

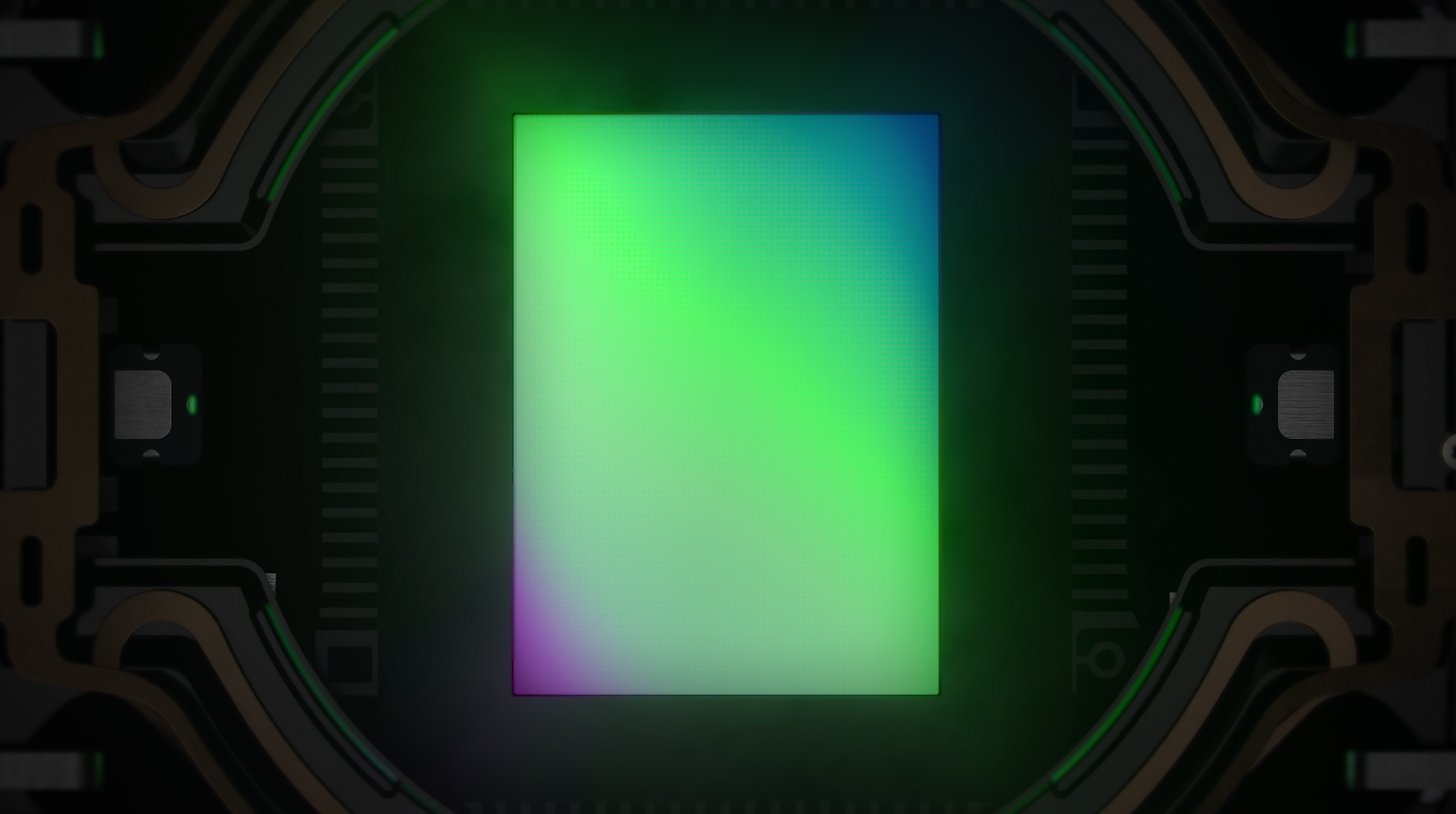

Every group of this mosaic of ‘sub-pixels’ can be combined with smart algorithms to form color information, yielding a color image. All but one of the iPhone 14 Pro’s sensors look like this:

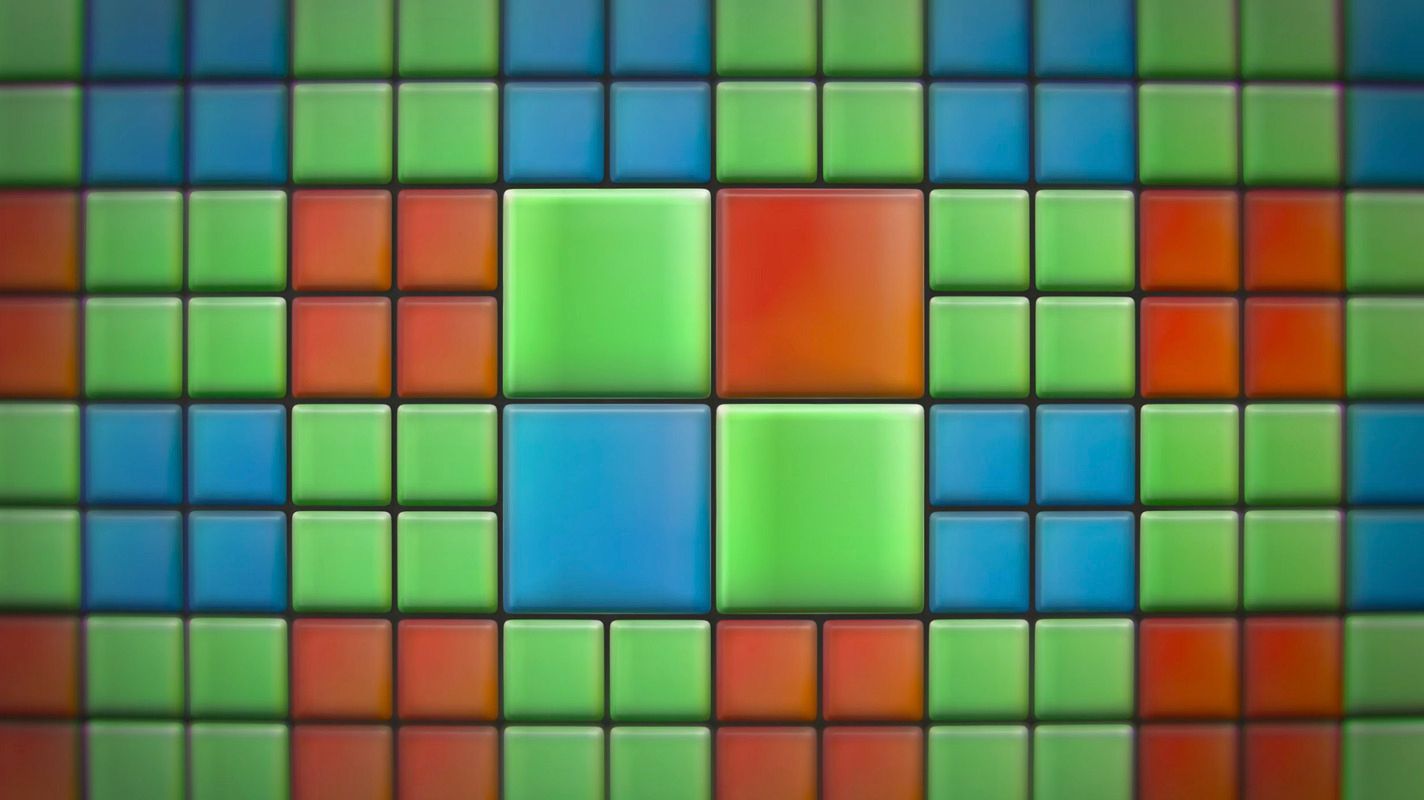

Every ‘pixel’ essentially being composed of one blue, one red and two green subpixels. The iPhone 14 Pro’s main camera, however, puts four times as many pixels in that same space:

You might be wondering why, exactly, it would not squeeze in four times as many pixels in an equally ‘mosaicked’ pattern, instead opting for this four-by-four pattern known as ‘Quad Bayer’.
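For reference, the two layouts can be printed with a few lines of code. This is a minimal sketch that assumes an RGGB ordering (red in the top-left corner); the ordering Apple’s sensor actually uses is not stated here, and the function names are ours.

```python
BAYER_TILE = [["R", "G"],
              ["G", "B"]]  # assumed RGGB ordering


def bayer_color(row: int, col: int) -> str:
    """Filter color at (row, col) in a regular Bayer mosaic."""
    return BAYER_TILE[row % 2][col % 2]


def quad_bayer_color(row: int, col: int) -> str:
    """Filter color at (row, col) in a Quad Bayer mosaic.

    Each Bayer cell becomes a 2x2 block of same-colored subpixels,
    so the pattern repeats every 4 rows and 4 columns.
    """
    return BAYER_TILE[(row // 2) % 2][(col // 2) % 2]


def render(color_at, size: int = 4) -> str:
    """Render a size x size corner of the mosaic as text."""
    return "\n".join(
        " ".join(color_at(r, c) for c in range(size)) for r in range(size)
    )


print("Bayer:")
print(render(bayer_color))
print("Quad Bayer:")
print(render(quad_bayer_color))
```

In the regular mosaic the colors alternate pixel by pixel. In the Quad Bayer version the same arrangement appears at twice the scale, with four same-colored neighbors sitting together.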

While we can dedicate an entire post to the details of camera sensors, allow me to briefly explain the benefits.

Apple has a few main desires when it comes to its camera. Resolution was not the only reason to move to a more advanced, larger and higher pixel-count sensor. Apple desires speed, low noise, light gathering ability, dynamic range (that is, detail in both shadow and light areas) and many more things from this sensor.

As we mentioned before, algorithms are required to ‘de-mosaic’ this color information. In terms of sheer speed, a Quad Bayer sensor can quickly combine these four ‘super subpixels’ of single color into one, creating a regular 12 megapixel image. This is critical for, say, video, which needs to read out lower resolution images at a very high rate from the camera. This also lowers noise; sampling the same part of the image with four different pixels and combining the result is a highly effective way to reduce any random noise in the signal.
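A minimal sketch of that combining step, on made-up numbers. It averages each 2×2 block of same-colored subpixels into one value, then uses simulated Gaussian noise to show that the combined samples scatter roughly half as much as single ones. Real sensors may sum charge or bin in hardware; plain averaging is an assumption made for illustration, and all names and readings below are hypothetical.

```python
import random
import statistics


def bin_2x2(raw):
    """Average each 2x2 block of a raw frame into one value.

    On a Quad Bayer sensor each such block sits under a single color
    filter, so the result is a regular Bayer mosaic at quarter resolution.
    """
    rows, cols = len(raw), len(raw[0])
    return [
        [
            (raw[r][c] + raw[r][c + 1] + raw[r + 1][c] + raw[r + 1][c + 1]) / 4
            for c in range(0, cols, 2)
        ]
        for r in range(0, rows, 2)
    ]


# A 4x4 Quad Bayer tile (hypothetical readings) becomes a 2x2 Bayer tile.
tile = [
    [10, 12, 50, 52],
    [11, 11, 49, 53],
    [48, 50, 90, 94],
    [52, 50, 92, 92],
]
print(bin_2x2(tile))  # [[11.0, 51.0], [50.0, 92.0]]

# Noise check: a flat grey patch with simulated Gaussian noise (sigma = 8).
rng = random.Random(0)
noisy = [[100 + rng.gauss(0, 8) for _ in range(200)] for _ in range(200)]
binned = bin_2x2(noisy)

single_sd = statistics.pstdev([v for row in noisy for v in row])
binned_sd = statistics.pstdev([v for row in binned for v in row])
print(f"noise before: {single_sd:.2f}, after binning: {binned_sd:.2f}")
```

Averaging four independent samples should cut random noise by about a factor of two (the square root of four), which is what the two printed figures approximate.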

But the benefits don’t end there: Quad Bayer sensors are capable of using different sensitivities for dark and light values of the color they are sensing to achieve better detail in shadows and highlights. This is also known as ‘dynamic range’.

Imagine if you are taking a shot of your friend in a dark tent with a bright sky behind them. Any camera would struggle to capture both the dark shadows of your friend and the bright sky beyond — a problem most modern phone cameras struggle with. Today, your phone will try to solve this by taking several images that are darker and brighter and merging the result in a process known as ‘HDR’ (High Dynamic Range).

The Quad Bayer sensor allows the camera to get a head start on this process by capturing every pixel at different levels of brightness to allow for an instant HDR capture. This cuts down on processing needed in images to correct for image merging artifacts, like moving subjects, which has always been tricky in modern smartphone photography processing that requires combining several photos to improve image quality.
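How Apple’s pipeline actually merges these readings is not public. As a toy illustration of the idea only, the sketch below merges one ‘long’ and one ‘short’ reading of the same spot. The clipping point, the exposure ratio and the function name are all hypothetical.

```python
FULL_WELL = 1023  # assumed clipping point of a single 10-bit readout


def merge_exposures(long_px: int, short_px: int, ratio: int) -> int:
    """Merge a long and a short exposure of the same scene point.

    `ratio` is how many times more light the long exposure collected.
    The long exposure is cleaner in shadows, so it is used unless it
    has clipped; in that case the short exposure is scaled up onto the
    same brightness scale.
    """
    if long_px < FULL_WELL:
        return long_px
    return short_px * ratio


# Dark tent interior: the long exposure holds detail, so it is used as-is.
print(merge_exposures(long_px=240, short_px=15, ratio=16))    # 240
# Bright sky: the long exposure clipped, so the short one is scaled up.
print(merge_exposures(long_px=1023, short_px=400, ratio=16))  # 6400
```

The merged sky value lands well above what a single readout could record, which is the extra dynamic range. Because both readings come from the same instant, there is no subject motion between them to correct for.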

We do have to end this short dive into the magical benefits of the Quad Bayer sensor with a small caveat: the actual resolution of a Quad Bayer sensor is not as high as that of a ‘proper’ 48 megapixel sensor with a regular (or ‘Bayer’) mosaic arrangement when shot at 48 megapixels. On paper, its greatest benefits come from merging its 48-megapixel-four-up mosaic into 12 megapixel shots.

Thus, I was really expecting to see nice, but overall unimpressive 48 megapixel shots — something that seemed additionally likely on iPhone 14 Pro, as Apple chose to keep the camera at 12 megapixels almost all the time, only enabling 48 megapixel capture when users go into Settings to enable ProRAW capture.

But let’s say you enable that little toggle…

48 Delicious Megapixels

I shoot a lot of iPhone photos. In the last five years, I’ve taken a bit over 120,000 photos — averaging at least 10,000 RAW shots per iPhone model. I like to take some time — a few weeks, at least — to review a new iPhone camera and go beyond a first impression.

I took the iPhone 14 Pro on a trip around San Francisco and Northern California, to the remote Himalayas and mountains of the Kingdom of Bhutan, and Tokyo — to test every aspect of its image-making, and I have to say that I was pretty blown away by the results of the main camera.

While arguably, a Quad Bayer sensor should not give true 48-megapixel sensor resolution as one might get from, say, a comparable ‘proper’ digital camera, the results out of the iPhone 14 Pro gave me chills. I have simply never gotten image quality like this out of a phone. There’s more here than just resolution; the way the new 48 megapixel sensor renders the image is unique and simply tremendously different from what I’ve seen before.

These photos were all shot in Apple’s ProRAW format and further developed in Lightroom or other image editors like Apple’s own Photos app. I was very impressed with the latitude of these files; I tend to expose my image for the highlights, shooting intentionally dark to boost shadows later, and these files have plenty of shadow information to do just that:

Detail-wise, we can see quite easily that it’s an incredible leap on previous iPhones, including the iPhone 13 Pro:

It helps that Bhutan is essentially a camera test chart, with near-infinite levels of detail in their decorations to pixel-peep:

Set against the iPhone 13 Pro, the comparison seems downright unfair. Older shots simply don’t hold a candle to it. The shooting experience is drastically different: larger files and longer capture times do hurt the iPhone 14 Pro slightly — from a 4-second delay between captures in proper ProRAW 48 megapixel mode to the occasional multi-minute import to copy over the large files — but the results are incredible.

This is an entirely subjective opinion, but there’s something in these images. There’s a difference in rendering here that just captures light in a new way. I haven’t seen this in a phone before. I am not sure if I can really quantify it, or even describe it. It’s just… different.

Take this photo, which I quickly snapped in a moment on a high Himalayan mountain pass. While a comparable image can be seen in the overview of ultra-wide shots, the rendering and feel of this shot is entirely different. It has a rendering to it that is vastly different than the iPhone cameras that came before it.

Is this unique to those 48 megapixel shots? Perhaps: I did not find a tremendous improvement in noise or image quality when shooting in 12 MP. I barely ever bothered to drop down to 12 MP, instead coping with the several-second delay when capturing ProRAW 48 MP images. But above all, I found a soul in the images from this new, 48-megapixel RAW mode that just made me elated. This is huge — and that’s not just the file size I am talking about. This camera can make beautiful photos, period, full stop. Photos that aren’t good for an iPhone. Photos that are great.

And yes: 48 megapixel capture is slow. We’re talking up to 4 seconds of capture time slow. While slow, the 48 MP image rendering and quality is worth the speed tradeoff to me. For now, it is also worth noting that iPhone 14 Pro will capture 10-bit ProRAW files only — even third party apps cannot unlock the previous 12-bit ProRAW capture mode, nor is ‘regular’ native RAW available at that resolution.

Halide and other apps can, however, shoot 48 MP direct-to-JPG, which is significantly faster and gives you fantastically detailed shots.

I understand Apple’s choice to omit a ‘high resolution’ mode as it would simply complicate the camera app needlessly. Users wouldn’t understand why key components of the iPhone camera like Live Photos or other things stopped working, why their iCloud storage would suddenly be full with four-times-larger files, and see little benefit unless zooming deeply into their shots.

But then… without that 48 megapixel resolution, I would’ve thought this butterfly in the middle of the frame would’ve been a speck of dust on my lens. And I wouldn’t get this wonderful, entirely different look. This isn’t just about the pixels but about what’s in the pixels — a whole image inside your image.

I think the 12 MP shooting default is a wise choice on Apple’s part, but it does mean that the giant leap in image quality on iPhone 14 Pro remains mostly hidden unless you choose to use a third party app to shoot 48 MP JPG / HEIC images or shoot in ProRAW and edit your photos later. Perhaps this is the first iPhone that truly puts the ‘Pro’ in ‘iPhone Pro’. If you know, you know.

Natural Bokeh

A larger sensor means better bokeh. It’s simply a fact. The reason for this is that a larger image field can allow for a more pronounced depth of field effect. In my testing, I found that the iPhone 14 Pro produces pleasing, excellent natural bokeh on close-focus subjects:

Unfortunately, you will see this nice natural background blur in fewer situations. The minimum focus distance of the main camera has changed to 20 cm, or a little under eight inches — a few centimeters farther than on the iPhone 13 Pro and any preceding iPhone. In practice, this also means you see the camera switching to its ultra-wide, macro-capable camera much more often. More on that later.

24mm vs 26mm

If you’re an absolute nerd like me, you will notice that your main camera is capturing a wider image. That’s no accident: In re-engineering the lens and sensor package for this new camera, Apple decided to go with a 24mm (full frame equivalent) focal length rather than the old 26mm. It’s impossible to objectively judge this.

Myself? I’d rather have a tighter crop. I found myself often cropping my shots to capture what I wanted. It could be habit — I have certainly shot enough 26mm-equivalent shots on an iPhone — but I’d honestly love it if it was tighter rather than wider. Perhaps closer to a 35mm equivalent.

Regardless of your own feelings, to offset this wider image, Apple did add a whole new 48mm lens. Or did they?

On The Virtual 2× Lens

I will only add a fairly minor footnote about this in-between lens, as we will be dedicating a future blog post to this interesting new option on iPhone 14 Pro.

Since the main camera now has megapixels to spare, it can essentially crop your shot down to the center of your image, resulting in a 12 megapixel, 48mm-equivalent (or 2×) shot. Apple has included this crop-of-the-main-sensor ‘lens’ as a regular 2× lens, and I think it’s kind of brilliant. The Verge wrote an entire love letter on this virtual lens, touting it as better than expected.

I agree. It’s a great option, and it felt sorely needed. For most users, 3× is a fairly extreme zoom level. The 2× 48mm field of view is an excellent choice for portraits, and a smart default for the native camera’s Portrait mode. Overall, I found cropping my 48MP RAW files by hand better than taking 2× shots in practice. For everyday snapshots, however, it’s practically indispensable. This feels like laying the groundwork for a far longer, more extreme telephoto zoom in the iPhone future.
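The arithmetic behind the virtual 2× lens can be sketched in a few lines. The 8064 × 6048 frame size is our assumption for a 48 megapixel, 4:3 sensor, and the helper name is illustrative; the 24mm figure is the full-frame equivalent mentioned earlier.

```python
def center_crop(width: int, height: int, zoom: float):
    """Return (x, y, crop_width, crop_height) of a centered crop."""
    crop_w = round(width / zoom)
    crop_h = round(height / zoom)
    return ((width - crop_w) // 2, (height - crop_h) // 2, crop_w, crop_h)


# Assumed full-resolution frame size for a 48 MP, 4:3 sensor.
SENSOR_W, SENSOR_H = 8064, 6048
MAIN_FOCAL_MM = 24  # full-frame equivalent of the main camera

x, y, w, h = center_crop(SENSOR_W, SENSOR_H, zoom=2)
print(f"crop origin: ({x}, {y}), size: {w} x {h}")  # (2016, 1512), 4032 x 3024
print(f"megapixels: {w * h / 1e6:.1f}")             # 12.2
print(f"equivalent focal length: {MAIN_FOCAL_MM * 2} mm")  # 48 mm
```

Halving both width and height keeps a quarter of the pixels, which is why a 2× crop of a 48 megapixel frame still lands at roughly 12 megapixels.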

A note on LIDAR

While Apple did not mention or advertise it, the LIDAR sensor on iPhone 14 Pro has been slightly altered. It matches the new, wider 24mm focal length of the main camera in its dot coverage. Depth sensing seems identical in quality and speed compared to previous iPhones.

Frustratingly, this LIDAR sensor does not seem to improve its previous behaviors. While I am sure it aids autofocus speed and depth capture, it is still an exercise in frustration to attempt a shot through a window with the camera.

As the LIDAR sees the glass pane as opaque — whether it is a room window, a glass pane, an airplane window, etc — it will focus the camera on it, rather than the background. I end up using manual focus in Halide, as there is no toggle to turn off LIDAR-assisted focus. I hope a future A17 chip has ML-assisted window detection.

Low Light

As I mentioned in our ultra-wide overview, I was somewhat skeptical of the claims of leaps in low-light performance. I still prefer to shoot little at night and in low light with phones, as night mode is a nice trick but still results in overly-processed images to my personal taste.

The low-light gains on the main camera are very noticeable, however. It’s particularly good in twilight-like conditions, before Night mode would kick in. I found the detail remarkable at times.

There are certainly great improvements being made here, and I can see that Apple has done a lot to improve processing of low-light shots to be less aggressive. It pays off to stick to 48 megapixels here, where noise reduction and other processing artifacts are simply less pronounced:

I found the camera to produce very natural and beautiful ProRAW shots in situations where I previously almost instinctively avoided shooting. There’s some mix happening here of the camera picking the sharpest frame automatically, processing RAW data with the new Photonic Engine and having a larger sensor that is making some real magic happen.

Finally, if you have the time and patience, you can go into nature and do some truly stunning captures of the night sky with Night mode.

While true astrophotography remains the domain of larger cameras with modified sensors, it is utterly insane that I did this by propping my iPhone against a rock for 30 seconds:

This is gorgeous. I love that I can do this on a phone — it kind of blows my mind. If you’d shown me this image a mere five years ago, I wouldn’t have believed it was an iPhone photo.

The flip side of this is that we have now had Night Mode on iPhone for 3 years, and it still sorely lacks advanced settings — or better yet, an API.

For an ostensible ‘pro camera’, the interface for Night Mode has to walk the fine line of being usable for novices as well as professional photographers. We’d love to add Night Mode to Halide, along with fine-grained controls. Please, Apple: allow apps to use Night Mode to capture images and adjust its capture parameters.

Telephoto

Last but very much not least: the telephoto camera. While I’ve said above that for most users, the 3× zoom factor (about 77mm for a full-frame camera equivalent lens focal length) is a bit extreme, it is by far my favorite camera on the iPhone.

If I go out and shoot on my big cameras, I rock a 35mm and 75mm lens. 75mm is a beautiful focal length, forcing you to focus on little bits of visual poetry in the world around you. It is actually fun to find a good frame, as you have to truly choose what you want in your shot.

My grave disappointment with the introduction of the 3× lens last year was that it seemed coupled with an older, underpowered, small sensor. Small sensors and long lenses do not pair well: as a long lens gathers less light, the small sensor dooms it to be a noisy camera by nature. Noisy cameras on iPhones will make smudgy shots, simply because they receive a lot of noise reduction to produce a ‘usable’ image.

Therefore, I was a bit disappointed in seeing no announcement of a sensor size or lens upgrade on iPhone 14 Pro’s telephoto camera. This part of the system, which so badly craved a bump in light collection ability, seemed like it had been skipped over another generation.

Color me extremely surprised, then, to use it and find it dramatically improved — possibly as much as the ultra-wide in practical, everyday use.

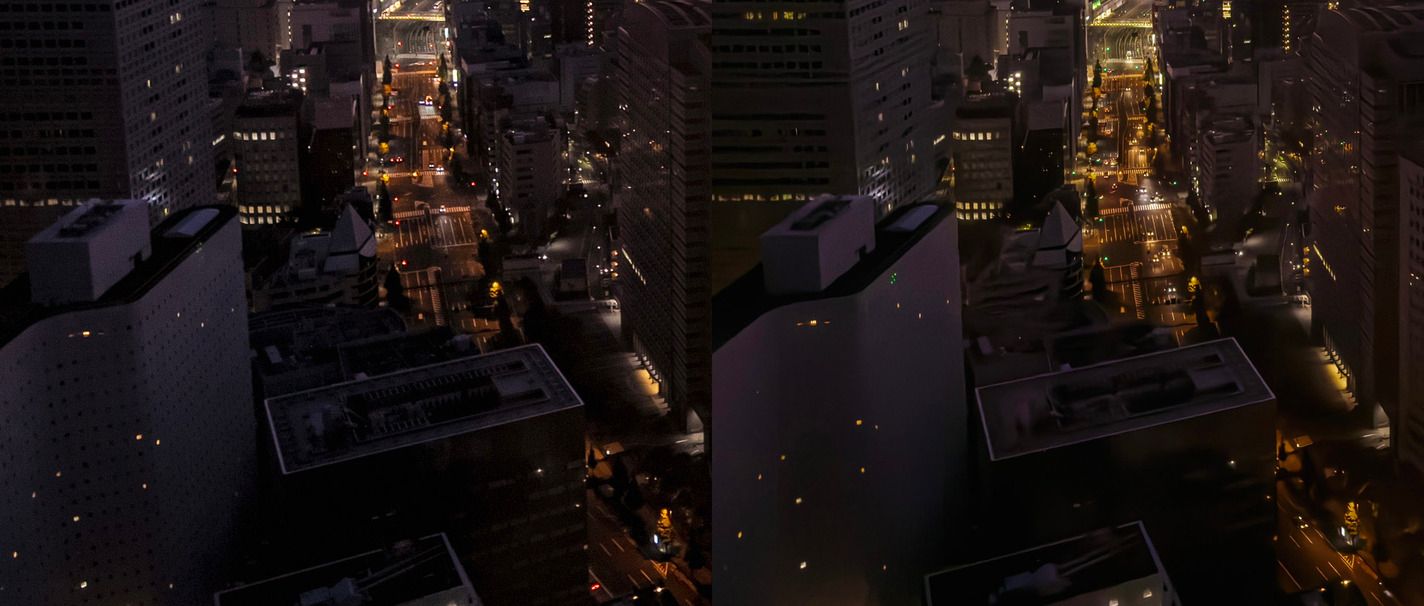

Compare this shot from the iPhone 13 Pro to the exact same conditions on iPhone 14 Pro:

While these two cameras ostensibly pack the same size sensor and exact same lens, the processing and image quality on the iPhone 14 Pro is simply leagues ahead. Detail and color are far superior. On paper, Apple has the exact same camera in these two phones — which means a lot of praise here has to go to their new Photonic Engine processing that seems to do a much nicer job at processing the images out of the telephoto camera and retaining detail.

At times, it truly blows you away:

Low light is improved, with much better detail retention and seemingly a better ability to keep the image sharp, despite its long focal length and lack of light at night.

Having a long lens on hand is one of the selling points of the Pro line of iPhones, and if you value photography, you should appreciate it appropriately.

There are certainly still times where it sadly misses, but overall, this is an incredible upgrade — seemingly entirely out of left field. Apple could’ve certainly advertised this more. I wouldn’t call this a small step. It has gone from a checkmark on a spec sheet to a genuinely impressive camera. Well done, Apple.

The Camera System

While this review has looked at individual cameras, iPhones have a different way of treating their camera array. The Camera app unifies the camera system as one ‘camera’: abstracting away the lens switching and particularities of every lens and sensor combination to work as one fluid, simple camera. This little miracle of engineering takes a lot of work behind the scenes; cameras are precisely matched to each other with micron precision at the manufacturing stage, and lots of work is done to ensure white balance and rendering consistency between them all.

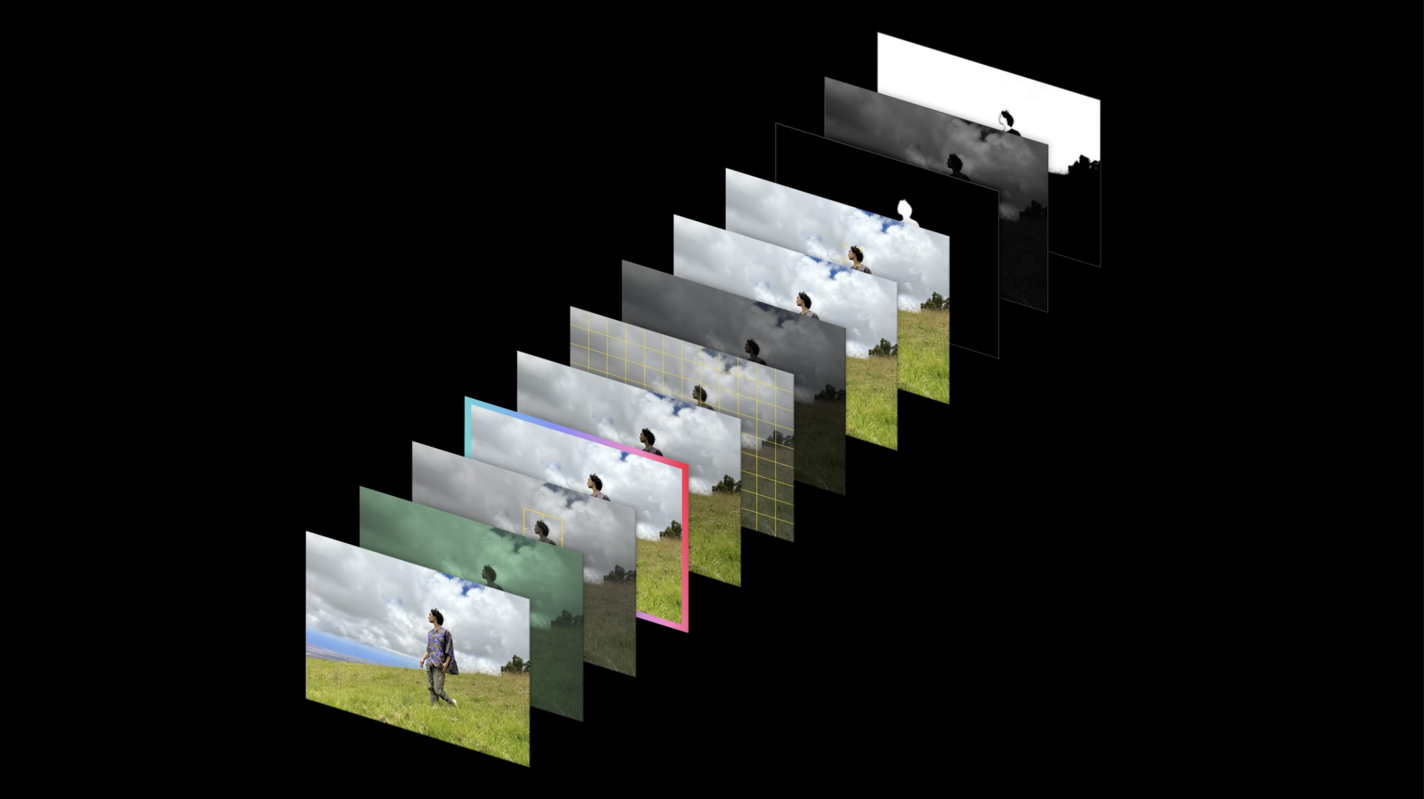

The biggest factor determining image quality for this unified camera for the vast majority of users is no longer the sensor size or lens, but the magic Apple pulls off behind the scenes to both unify these components and get higher quality images out of the data they produce. Previously, Apple had several terms for the computational photography they employed: Smart HDR and Deep Fusion were two of the processes they advertised. These processes, enabled by powerful chips, capture many images and rapidly merge them to achieve higher dynamic range (that is, more detail in the shadows and highlights) and more accurate color, and to extract more texture and detail, while also using artificial intelligence to separate out various areas of the image to apply enhancements and noise reduction.

The result of all this is that any image from an iPhone comes out already highly edited compared to the RAW data that the camera actually produces.

Processing

If a camera’s lens or sensor is an aspect worth reviewing, then so is a modern phone camera’s processing. Years ago, iPhones started merging many photos into one to improve dynamic range, detail and more — and this processing is different with every iPhone.

Starting with iPhone 13 Pro, we began to see mainstream complaints about iPhone camera processing. That is, people were not complaining about the camera taking blurry images at night because of a lack of light, or missing autofocus, but about images with odd ‘creative decisions’ taken by the camera.

Ever since our look at iPhone 8, we have noted a ‘watercolor effect’ that rears its head in images when noise reduction is being applied. At times, this was mitigated by shooting in RAW (not ProRAW), but as the photography pipeline on iPhones has gotten increasingly complex, pure RAW images have deteriorated.

iPhone 13 Pro ranks alongside the iPhone XS in our iPhone camera lineup as one of the two iPhones with the heaviest, most noticeable ‘processed look’ on their shots. Whether this is because these cameras tend to take more photos at higher levels of noise to achieve their HDR and detail enhancement, or for other reasons, we cannot be certain, but they have images that simply come out looking more heavily ‘adjusted’ than any other iPhone:

The two selfies shot above on iPhone 13 Pro demonstrate an odd phenomenon: the first shot is simply a dark, noisy selfie taken in the dark. Nobody is expecting a great shot here. The second sees bizarre processing artifacts where image processing tried to salvage the dark shot, resulting in an absurd watercolor-like mess.

With this becoming such a critical component of the image, we’ve decided to make it a separate component to review in our article.

For those hoping that iPhone 14 Pro would do away with heavily processed shots, we have some rather bad news: it seems iPhone 14 Pro is, if anything, even more hands-on when it comes to taking creative decisions around selective edits based on subject matter, noise reduction and more.

This is not necessarily a bad thing.

You see, we liken poor ‘smart processing’ to the famous Clippy assistant from Microsoft Office’s early days. Clippy was a (now) comical disaster: in trying to be smart, it was overly invasive in its suggestions. It would make erroneous assumptions, both misinterpreting inputs and outputting bad corrections. If your camera does this, you will get images that are unlike what you saw and what you wanted to capture.

Good processing, on the other hand, is magic. Examples include night mode, which is the heaviest processing of images that your iPhone can do, all to achieve what you can easily see with the naked eye: a dimly lit scene. Tiny cameras with small lenses simply do not gather enough light to record this, and ‘stitching’ together many shots taken in quick succession, stabilizing them in 3D space, and even using machine learning to guess their color allows for a magical experience.
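To make the idea of merging many shots concrete, here is a minimal Python sketch. It is not Apple’s pipeline: it assumes the frames are already perfectly aligned and that the noise is random and independent in every frame, and all of the names in it (`simulate_frame`, `stack_frames`, `residual_noise`) are made up for illustration. It only shows why averaging a burst of noisy frames gives a cleaner result than any single frame.

```python
import random
import statistics


def simulate_frame(scene, noise_sigma, rng):
    """Return one noisy capture of `scene` (a list of true brightness values)."""
    return [value + rng.gauss(0.0, noise_sigma) for value in scene]


def stack_frames(frames):
    """Average already-aligned frames pixel by pixel."""
    count = len(frames)
    return [sum(pixels) / count for pixels in zip(*frames)]


def residual_noise(image, scene):
    """Standard deviation of the error between an image and the true scene."""
    return statistics.pstdev([a - b for a, b in zip(image, scene)])


# Hypothetical numbers: a dim, flat patch with fairly strong sensor noise.
rng = random.Random(14)
scene = [0.05] * 4000
single = simulate_frame(scene, 0.02, rng)
burst = [simulate_frame(scene, 0.02, rng) for _ in range(16)]
stacked = stack_frames(burst)

print("single frame noise:", residual_noise(single, scene))
print("16-frame stack noise:", residual_noise(stacked, scene))
```

With independent noise, averaging N frames cuts the noise by roughly the square root of N, so a sixteen-frame stack is left with about a quarter of the single-frame noise. Real night mode has to do far more than this, since hands shake and subjects move between frames.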

iPhone 14 Pro vastly improves on iPhone 13 Pro in this department by having a far higher success rate in its processing decisions.

There are still times where iPhone 14 Pro misses, but at a much lower rate than the iPhone 13 Pro and preceding iPhones. If you’re like me, and prefer to skip most of this processing, there’s some good news.

48MP Processing

Of note is that the 48 megapixel images coming out of the iPhone 14 Pro exhibit considerably less ‘heavy handed’ processing than the 12 megapixel shots. There are a number of reasons this could be happening: one is that, with four times the data to process, the system cannot possibly apply as much processing as it does to a lower resolution shot.

The other likely reason you notice less processing is that it is a lot harder to spot the sharpened edges and smudged small details on a higher resolution image. In our iPhone 12 Pro Max review, I noted that we are running into the limitations of the processing’s resolution; a higher resolution source image indeed seems to result in a more natural look. But one particular processing step being skipped is much more evident.

It appears 48 MP captures bypass one of my personal least favorite ‘adjustments’ that the iPhone camera now automatically applies to images with people in them. Starting with iPhone 13 Pro, I noticed occasional backlit or otherwise darkened subjects being ‘re-exposed’ with what I can only call a fairly crude automatic lightness adjustment. Others have noticed this, too, even on iPhone 14 Pro:

This is a ‘clever’ step in iPhone photography processing: since it can super-rapidly segment the image into components, like human subjects, it can apply selective adjustments. What matters is the degree of adjustment and its quality. I was a bit disappointed to find that this adjustment seems to be just as heavy-handed as on the previous iPhone: I have honestly never seen it make for a better photo. The result is simply jarring.

A second, rarer artifact I have seen on the 48 megapixel ProRAW files (and to a lesser extent, 48 megapixel JPG or HEIC files shot with Halide) is what seems like processing run entirely amok. At times, it might simply remove entire areas of detail in a shot, while leaving outlines and some details perfectly intact.
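As a toy illustration of a segmentation-driven adjustment, the Python sketch below brightens only the pixels that a mask marks as ‘subject’. This is a stand-in built on assumptions, not how the iPhone does it: the mask is written by hand rather than produced by a segmentation model, the image is a tiny grid of brightness values between 0 and 1, and `relight_subject` and its `gain` parameter are hypothetical names. The point is that `gain` is the ‘degree of adjustment’: the same mask can give a subtle lift or a jarring one.

```python
def relight_subject(luma, mask, gain):
    """Multiply brightness by `gain` only where `mask` is True, clamped to 1.0."""
    return [
        [min(1.0, value * gain) if is_subject else value
         for value, is_subject in zip(luma_row, mask_row)]
        for luma_row, mask_row in zip(luma, mask)
    ]


# A bright background (0.9) with a dark, backlit subject (0.2) in the middle column.
luma = [
    [0.9, 0.9, 0.9],
    [0.9, 0.2, 0.9],
    [0.9, 0.2, 0.9],
]
# Hand-written stand-in for a person segmentation mask.
mask = [
    [False, False, False],
    [False, True, False],
    [False, True, False],
]

subtle = relight_subject(luma, mask, gain=2.0)  # subject still reads as backlit
heavy = relight_subject(luma, mask, gain=4.0)   # subject nearly as bright as the sky
print(subtle[1])
print(heavy[1])
```

In the heavy case the subject ends up almost as bright as the background behind it, which is one plausible way to think about why a strong selective lift can look pasted on.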

Compare these shots, the first taken on iPhone 13 Pro and the second on an iPhone 14 Pro:

The second shot is far superior. Even setting aside the resolution, the detail retained in the beautiful sky gradient, the streets and surroundings is plainly visible. There’s no comparison: the iPhone 14 Pro shot looks like I took it on a big camera.

Yet… something is amiss. If I isolate just the Shinjuku Washington Hotel and KDDI Building in the bottom left of the image, you can see what I mean:

You can spot stunning detail in the street on the right image — utterly superior rendering until the road’s left side, at which point entire areas of the building go missing. The windows on the Washington Hotel are simply gone on the iPhone 14 Pro, despite the outlines of the hotel remaining perfectly sharp.

I haven’t been able to reproduce this issue reliably, but occasionally this bug slips into a capture and shows that processing can sometimes take on a life of its own — assuming the brush and drawing its own reality. Given that this is the first 48-megapixel camera from Apple, some bugs are to be expected, and it’s possible that we’ll see a fix for this in a future update.

Lens Switching

When we released Halide for the iPhone X, some users sent in a bug report. There was a clear problem: our app couldn’t focus nearly as close as Apple’s camera app could. The telephoto lens would flat-out refuse to focus on anything close. We never fixed this — despite it being even worse on newer iPhones. We have a good reason for that.

The reason for this is a little cheat Apple pulls. The telephoto lens actually can’t focus that close. You’d never know, because the built-in app quickly swaps in the regular main camera’s view instead, cropped in to the same zoom as the telephoto lens. Apple actually does this in other situations too, like when there is insufficient light for the smaller sensor behind the telephoto lens.

I believe that for most users, this kind of quick, automatic lens switching provides a seamless, excellent experience. Apple clearly has a vision for the camera: it is a single device that weaves all lenses and hardware on the rear into one simple camera the user interacts with.

This starts being a bit less magical and more frustrating for a more demanding user. Apple seems to have some level of awareness about this: the camera automatically switching to the macro-capable lens led to some frustration and confusion when it was introduced, forcing them to add a setting to the camera app that toggles this auto-switching behavior.

While that solves the macro-auto switch for more demanding users, the iPhone 14 Pro still has a bad habit of not switching to the telephoto lens in a timely fashion unless you use a third-party camera app. This combines very poorly with the telephoto lens now having a 3× zoom factor rather than the previous 2×; the resulting cropped image is a smudgy, low-detail mess.

Even with ProRAW capture being enabled, the camera app will still yield surprise-cropped shots that you assumed were telephoto camera captures.

On the left is an image captured while in ‘3×’ in Apple Camera with ProRAW enabled, with the right image taken a few seconds later. The left image is actually a crop of the 1× camera, despite the app claiming I was using the telephoto lens. It took several seconds for it to finally switch to the actual camera.
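A bit of arithmetic shows why a crop standing in for the telephoto looks so soft. The Python sketch below assumes the crop comes from the main camera’s standard 12 megapixel output (4032×3024); whether the camera app instead crops from the full 48 megapixel readout (8064×6048) in this situation is not confirmed here, so that case is computed as well. The helper names are made up for illustration.

```python
def cropped_size(width, height, zoom):
    """Dimensions left after a centre crop that mimics an integer `zoom` factor."""
    return width // zoom, height // zoom


def megapixels(width, height):
    """Pixel count expressed in millions of pixels."""
    return width * height / 1_000_000


# Assumed source frames for the main (1x) camera.
sources = {
    "12 MP output": (4032, 3024),
    "48 MP readout": (8064, 6048),
}

for label, (width, height) in sources.items():
    crop_w, crop_h = cropped_size(width, height, 3)
    print(f"{label}: 3x crop is {crop_w}x{crop_h}, "
          f"about {megapixels(crop_w, crop_h):.1f} MP before upscaling")
```

A 3× crop keeps only one ninth of the pixels. Starting from a 12 megapixel frame, that leaves roughly 1.4 megapixels of real image data to be stretched back up to full size, where the previous 2× crop would have kept a quarter.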

This problem is by no means limited to iPhone: even the latest and greatest Pixel 7 seems to have this behavioral issue, which shows that no matter how ‘smart’ computational photography gets, its cleverness turns into a frustration whenever it makes the wrong decisions.

Don’t get me wrong: I think most of these frustrations with the automatic choices that Apple makes for you are a photographer’s frustrations. Average users are almost always benefitting from automatic, mostly-great creative choices being made on their behalf. Third-party camera apps (like ours) can step in for most of these frustrations to allow for things like less processed images, 48 megapixel captures and more — but sadly, are also beholden to frustrating limitations, like a lack of Night mode.

I would encourage tech press and reviewers to start treating image processing and camera intelligence as seriously as a megapixel or lens aperture bump in their reviews; it is affecting the image a whole lot more than we give it credit for.

Conclusion

I typically review the new iPhones by looking at their general performance as a camera. It’s often disingenuous to compare an iPhone’s new camera to that of the iPhone that came a mere year before it; the small steps made can be more or less significant, but we forget that most people don’t upgrade their phones every year. What matters is how the camera truly performs when used.

This year is a bit different.

I am reminded of a post in which John Gruber writes about a 1991 Radio Shack ad (referencing this 2014 post by Steve Cichon). In this back-of-the-newspaper ad, Radio Shack advertised 15 interesting gadgets for sale. Of these 15 devices, 13 are now always in your pocket, including the still camera.

While this is true to an extent, the camera on a phone will simply never be as good as the large sensors and lenses of big cameras. This is a matter of physics. For most, it is approaching a level of ‘good enough’. What’s good enough? To truly be good enough, it has to capture reality to a certain standard.

One of the greatest limitations I ran into as a photographer with the iPhone was simply its reproduction of reality at scale. iPhone photos looked good on an iPhone screen; printed out or when seen on even an iPad-sized display, their lack of detail and resolution made them an obvious cellphone capture. Beyond that, it was a feeling; a certain, unmistakable rendering that just didn’t look right.

With iPhone 14 Pro, we’re entering the 48 megapixel era of iPhone photography. If it were merely a matter of detail or pixels, it would mark a step towards the cameras from the Radio Shack ad.

But what Apple has delivered in the iPhone 14 Pro is a camera that performs in all ways closer to a ‘proper’ camera than any phone ever has. At times, it can capture images that truly render unlike a phone camera — instead, they are what I would consider a real photo, not from a phone, but from a camera.

That’s a huge leap for all of us with an iPhone in our pocket.