You may think the e in 17e stands for economy or essential, but I think it stands for entry. Sure, it doesn't have all the bells and whistles, but you get access to a vibrant ecosystem of third-party apps, and now your friends will stop mocking you for green text bubbles.

This is the first iPhone you buy a teenager when they've had enough of the hand-me-downs. This is the phone that introduces you to the Apple ecosystem if you're coming from Android. The assignment sounds simple, but it's really hard to strike the balance between "budget" and "cheap."

First came the iPhone 5C in 2013. It was the last-generation iPhone repackaged in a cute plastic shell, with $100 off. It wasn't a flop, but it wasn't exactly a hit. Maybe because it was half the speed of the new iPhone, or maybe its distinct style told everyone you had the "cheap" iPhone.

Contrast this with the iPhone XR in 2018, which was so successful it outsold the flagship iPhone XS and XS Max.

The XR had the same A12 processor as the iPhone XS, but was $250 cheaper. It cut costs by losing a camera, swapping stainless steel for aluminum, and OLED for an LCD. But thanks to the screen, the XR actually had better battery life than the flagship models. Whoops.

The e-series is the XR's spiritual successor. It features the same chipset as the flagship model. Aesthetically, you might mistake an e for a flagship model, until you get to the camera, the focus of this post.

The top criticism of the 16e was its lack of MagSafe, which is rectified with the 17e. Because an iPhone without MagSafe is like a Porsche without power windows. Apple has also doubled the storage, which we'd call a minor spec bump in normal times, but it's quite impressive in the midst of an AI-driven storage crisis.

Same Camera, New Year

The cameras are unchanged, which isn't a problem. Last year we left a glowing review for the 16e system, and there's no need to do it again. Instead we'll revisit a lingering question, which reviewers can't answer in a few days of testing: will you miss an ultra-wide lens?

Between testing the iPhone Air last September, and now the 17e, we finally have an answer: yes, it's a little annoying, but not for the reason you think.

An iPhone ultra-wide camera doubles as a macro lens, allowing your camera to focus as little as 2 centimeters from your subject. You may think, "I don't need bug photos," or "I hear Halide's awesome neural macro feature is almost as good," but we now live in a world where cameras serve not just art, but utility. We live in a world of QR codes.

Without the macro capabilities of the iPhone ultra-wide camera, you must hold your phone at least seven inches away from QR codes, which feels awkward when you're sitting at a restaurant table. It's even more annoying with smaller QR codes.

Aside from that… the lack of the ultra-wide is fine. If you need to capture that epic landscape, the built-in camera app has you covered with a panorama mode. Speaking of built-in camera software…

Next Generation Portraits

Thanks to the A19 processor, the e-series now supports Apple's "next generation portrait mode." To understand the significance, let's dig a bit deeper into how portrait mode works.

Due to the tiny size of iPhone sensors, it's physically impossible to create the beautiful background blur you see in big, dedicated cameras.

Ten years ago, Apple introduced portrait mode on the iPhone 7 Plus to simulate this bokeh. While we take it for granted today, this computational photography was revolutionary for a phone. Blurring is easy; the tricky part is knowing which parts to blur based on their distance. The heart of portrait mode is depth-mapping.

An example Depth Map effect via Wikipedia
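To make the "which parts to blur" idea concrete, here's a minimal sketch of turning a depth map into a per-pixel blur amount. To be clear, this is not Apple's algorithm. The linear falloff, the `max_radius` and `falloff` parameters, and the tiny depth map are all assumptions made up for illustration; a real lens model is more involved.

```python
def blur_radii(depth_map, focus_depth, max_radius=12.0, falloff=2.0):
    """Map each depth (in meters) to a blur radius (in pixels).

    Assumed toy model: pixels at the focus depth stay sharp, blur grows
    linearly with distance from the focal plane, and the radius is
    capped at max_radius once a pixel is `falloff` meters away.
    """
    radii = []
    for row in depth_map:
        radii.append([
            min(max_radius, abs(depth - focus_depth) / falloff * max_radius)
            for depth in row
        ])
    return radii


# Hypothetical 2x3 depth map in meters: subject near 1 m, wall at 4 m.
depth_map = [
    [1.0, 1.1, 4.0],
    [1.0, 2.0, 4.0],
]

radii = blur_radii(depth_map, focus_depth=1.0)
for row in radii:
    print([round(r, 2) for r in row])
```

The subject pixels get a radius at or near zero, while the wall hits the cap. Everything interesting in portrait mode comes down to how good that depth map is.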

The first version worked by capturing two photos from the two rear cameras. Then an algorithm compared the slight differences in viewing angle to guess the depth of objects, similar to how human depth perception works.
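The textbook version of this trick is stereo triangulation: the more an object shifts between the two views (its disparity), the closer it is. Here's a rough sketch of that relationship. The focal length and camera spacing below are invented numbers, not real iPhone specs, and Apple's actual pipeline is surely more sophisticated.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Classic pinhole stereo relation: depth = focal_length * baseline / disparity.

    disparity_px: how many pixels a point shifts between the two views.
    Returns depth in meters; zero or negative disparity is treated as
    "too far to measure" and returns infinity.
    """
    if disparity_px <= 0:
        return float("inf")
    return focal_length_px * baseline_m / disparity_px


# Assumed values for illustration only.
FOCAL_LENGTH_PX = 3000.0  # focal length expressed in pixels
BASELINE_M = 0.01         # 1 cm between the two lenses

near = depth_from_disparity(30.0, FOCAL_LENGTH_PX, BASELINE_M)  # large shift, close object
far = depth_from_disparity(6.0, FOCAL_LENGTH_PX, BASELINE_M)    # small shift, distant object
print(f"near: {near:.2f} m, far: {far:.2f} m")
```

With lenses this close together, distant objects only shift by a few pixels, so a one-pixel matching error moves the estimate a lot.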

Unfortunately, this dual-camera approach is pretty low resolution, which means it can't make out fine details, like hair. So Apple introduced an AI step that isolates the humans from the background.

iPhone AI creates a mask for Ethan in the photo.

Unfortunately, dual-camera depth requires a lot of resources. Have you noticed Apple's own camera app disables its automatic portrait mode when you enable ProRAW? Apparently it's just too demanding. It's a shame, because RAWs allow incredible flexibility when editing photos.

Infrared and LiDAR Depth

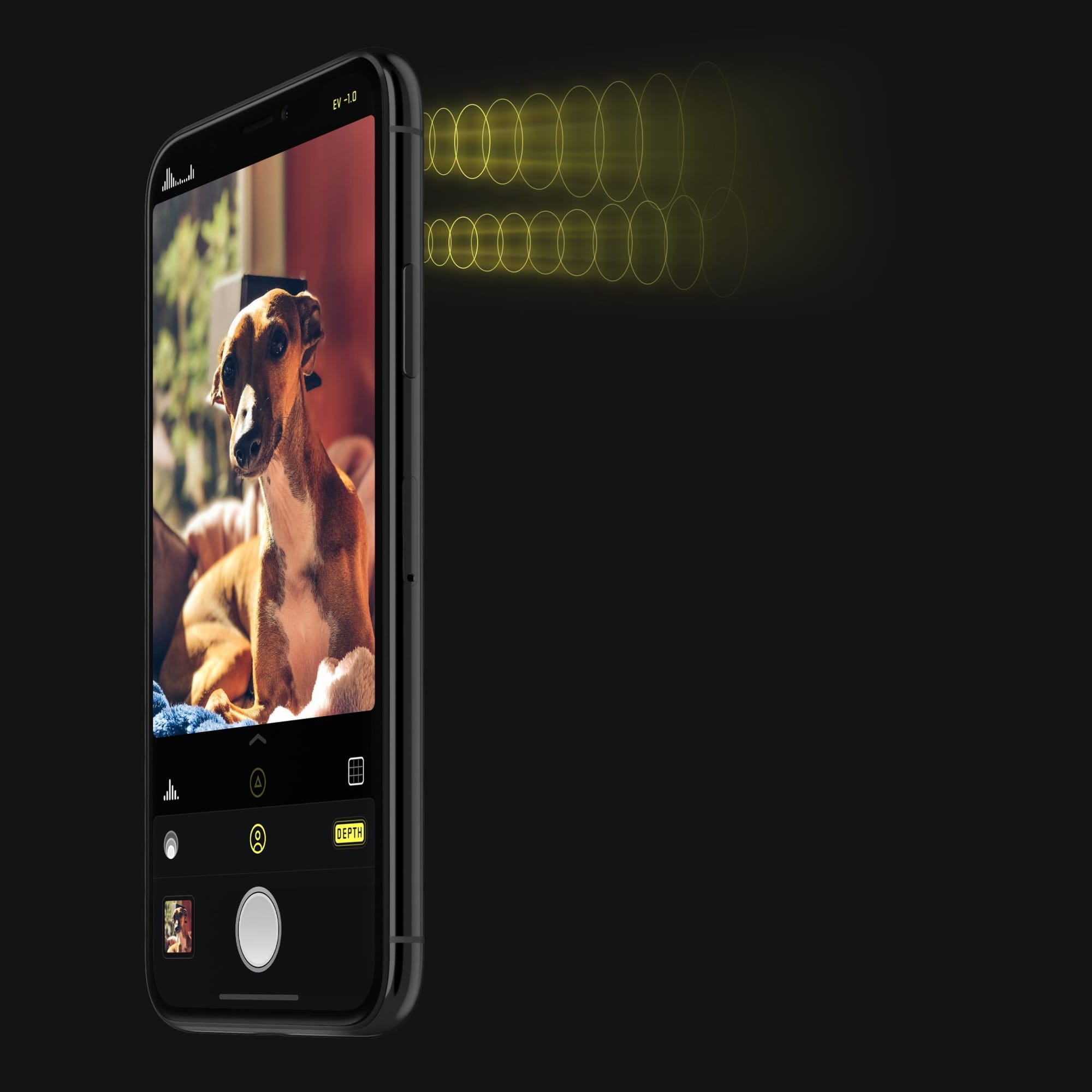

With the iPhone X, Apple added a system to unlock your phone with your face. Three years later, Apple launched the iPhone 12 Pro with a rear-facing LiDAR sensor. Both of these systems project infrared dots to generate a 3D representation using… algorithms.

While these sensors are more accurate around relative distances, they're still pretty low resolution. You still need an AI pass to bring out fine details.

While LiDAR is awesome, it's limited to the iPhone Pro. So what is a LiDAR-less, single-camera iPhone to do?

Single Camera Depth

The iPhone XR pulled off portrait mode with a single camera using the sensor's "focus pixels." We described it in our writeup years ago, but in short, it's similar to the dual-camera approach, as if both cameras sat on the same sensor. It's a neat idea, but even less accurate than what came before.

The iPhone SE2, launched in 2020, changed everything. Rather than use Focus Pixels, it generated a depth map entirely through AI. It even worked if you took a photo of a photo. It powers the 16e, and now the 17e.

So why explain all of that? "Next generation portraits" brings two new features: the ability to capture dogs and cats, and the ability to change focus after the fact. Both of those features were introduced with the iPhone 15 series. I would bet they're running the exact same AI built for the 15 series.

So the big question is: how well does AI-generated depth compare to dual-camera or LiDAR depth? So well that we're adopting the same approach in Halide.

Portrait Mode on the 17e vs Halide's upcoming portrait support. I have no idea when the latter will be ready.

When LiDAR isn't available, we're using AI-driven depth, and ditching the dual-camera technique. This enables us to support RAW capture, which powers Process Zero and other upcoming looks.

Editor's Note: For anyone about to write in, "I thought Halide was about AI-free photography!" Sir, this is an iPhone Review. We'll dig into the AI photography conundrum when the feature launches. That said, our depth mode has always used that AI-driven human masking to work right. People love the feature, so we can't put the genie back in the bottle. Ironically, by using AI to generate the depth data, we can generate more authentic portraits with Process Zero.

The One Flaw

Much like the baseline iPhone 17, the 17e does not let you capture Apple Log or ProRAW, formats loved by filmmakers and photographers who want to make the most of their images. From a purely technical perspective, their absence is odd.

ProRAW launched alongside the iPhone 12 Pro, and it made sense that these files demanded the most powerful phones. At the time, the baseline iPhone had 4GB of memory. The bit-depth and computational photography probably required a device with 6GB of memory. Similarly, Apple Log launched when baseline models had 6GB of memory, while the iPhone 15 Pro had 8GB.

The 17e has 8GB of memory, the same as the 15 Pro. It has an even faster processor. Keep in mind, I didn't develop the algorithms that power these formats, so maybe I'm missing something, but from the outside I don't see any technical reason the 17e can't output Apple Log or ProRAW.

An obvious explanation is that Apple wants to lock them away on their pricier iPhones, and that's totally fair! Apple built the software, so they can sell it however they want, but I think this decision actually hurts Apple overall. Customers can find a cheaper phone with more cameras and more powerful software: a used iPhone 15 Pro.

A quick search on Amazon turns up a refurbished 256GB iPhone 15 Pro model for $440. For another $120 at an Apple Store, you can swap out a new battery, coming in at $560 total. That's $40 less than a 17e, with ProRAW and Apple Log. Plus, titanium is awesome.

It speaks to Apple's odd position. Every year we see iterative improvements to iPhone hardware, but we've reached a point of diminishing returns. Apple is full of great engineers, but they're still beholden to the laws of physics. Unlike a dedicated, big camera, iPhones need to strike a balance between image quality and the comfort of carrying the device in your pocket.

The entire thesis of computational photography is overcoming hardware limitations through software. Yet Apple has a history of giving away consumer software to help sell hardware. They give away their iWork suite when Microsoft charges for Office, although that dynamic is changing with Apple Creator Studio; iWork is still free, but AI features require a subscription.

If Apple wants to increase revenue through software, cloud-driven features make perfect sense. They're even non-transferable! However, arbitrarily locking ProRAW and Apple Log to specific models will just improve used iPhone sales, where Apple doesn't get a cut. It's cutting off the nose to spite the face.

In a way, Apple is a victim of their own high standards. They build devices that last. Unfortunately, this leaves them in a position where the worst threat to an iPhone is another iPhone.